Somewhere inside your organization right now, an AI agent is making a decision. Not being asked to make one. Not waiting for a human to review the options. Just making one, on its own, at machine speed, as part of a workflow that may touch three other agents, two enterprise systems, and a customer record with protected data inside it. By the time you finish reading this sentence, that decision has already been made.

This isn't a warning about what's coming. It's a description of what's already happening.

As of 2026, virtually every enterprise that has moved beyond AI experimentation into production deployment is running agents in the wild. And that's most of them. AI agents are no longer a pilot program. They are operational infrastructure, embedded in sales, operations, HR, finance, customer experience, legal, and IT workflows. And they are multiplying faster than any enterprise technology that came before them.

So the question isn't whether your organization is using AI agents. The real question, the one that will define competitive outcomes over the next three years, is whether anyone is actually managing them.

Not monitoring them. Not logging them. Managing them. With governance, accountability, ROI visibility, and compliance controls. With the ability to say, precisely and not approximately, what your agents are doing, why they're doing it, and whether it's generating the value you're paying for.

For most enterprises today, that answer doesn't exist. Not because organizations lack ambition. But because they've poured resources into building and deploying agents while treating governance as something to figure out later, once the agents prove their value. What they're discovering, often at the worst possible moment, is that the absence of a management layer is exactly what prevents agents from proving their value. And it's what turns an AI investment from a strategic advantage into an operational and regulatory liability.

"Every enterprise has a strategy for building AI agents. Almost none have a strategy for managing them. That gap is about to become the most expensive oversight in enterprise technology history."

What follows is an examination of why that gap exists, how wide it already is, and what it takes to close it, before a regulator, an auditor, or an operational failure makes the need impossible to ignore.

The scale of AI agent deployment in 2026: Why agent management can no longer wait

It's easy to underestimate how far the agentic AI transition has already progressed. As of early 2026, enterprise deployments of AI agents, autonomous systems capable of reasoning, planning, tool use, and multi-step execution, have moved decisively from pilot projects into production operations. Gartner projects that by 2028, the average Fortune 500 enterprise will have over 150,000 agents in use, up from fewer than 15 in 2025. That's not a gradual curve. That's a vertical line.

And the investment is following the same trajectory. Gartner projects $550B of global AI spend will flow to agentic orchestration and Agent Management Platforms by 2029, representing roughly 40% of total enterprise AI investment.

Read more on Agent Management Platforms here - Beyond Agent Sprawl: The Rise of AI Agent Management Platforms

But scale without structure creates a specific kind of problem. And enterprise history is pretty consistent on this point. Every major technology transition, mainframes to client-server, client-server to cloud, monoliths to microservices, has followed the same arc. Rapid adoption first. Then a governance reckoning. Then billions spent on the management platforms that should have been built earlier. The organizations that invested early captured the advantage. Those that waited managed the consequences.

We are at that inflection point right now with AI agents. The question is which side of it your organization will be on.

The pattern is clear enough to be predictive. In 2015, enterprises were deploying microservices at pace without adequate orchestration infrastructure. By 2018, service mesh platforms like Istio, Linkerd, and Consul had become mission-critical investments because the complexity of ungoverned microservice sprawl had become impossible to manage. The agent management moment is arriving on the same trajectory, compressed into a shorter timeline because agents operate at higher stakes. They make decisions, not just API calls.

The organizations feeling this most acutely are the ones that started earliest. They have the most agents deployed across the most frameworks, and the clearest view of how fast the governance gap compounds without an Agent Management Platform in place. Their operational pain today is the preview of what every enterprise will experience within quarters, not years.

The AI agent ROI problem: Why enterprises cannot measure what they are building

Can your organization state the return its AI agents are generating? Not approximately. Not with reference to satisfaction score improvements that haven't been independently validated. Not with cost estimates assembled from three different cloud bills and a department budget spreadsheet. Precisely: agent by agent, workflow by workflow, with clear attribution of cost to outcome.

For the overwhelming majority of organizations, the honest answer is no. And that is not a small problem. It is a capital allocation failure that is compounding with every new agent deployment.

Only 15% of IT leaders strongly believe they have the right governance models in place to manage AI agents in their enterprise applications. Meanwhile, organizations without consistent AI governance are over three times less likely to report high value from their AI investments. (Gartner, 2025)

Only 22% of organizations report confidence in their ability to measure ROI on AI investments at the project level, according to a 2025 Deloitte AI survey. At the individual agent level, that number falls further still.

The dysfunction this creates is predictable. Organizations over-invest in high-visibility agents that perform impressively in demos but underdeliver operationally, because nobody is measuring operational performance against business outcomes. They under-invest in high-value agents that quietly generate returns but lack organizational champions in the budget process, because the returns are invisible. And they fail to deprecate underperforming agents, accumulating financial and technical debt that compounds over time, because without a measurement infrastructure, there is no defensible basis for the deprecation decision.

Marketing reports that the customer service agent improved satisfaction by 40%. Sales says the prospecting agent is generating qualified leads. Operations claims the process automation agent has cut cycle times by a third. Each number is self-reported by the team that owns the agent. The token costs are distributed across cloud bills. There is no unified denominator. The CFO, sitting across from all of this in a budget review, has no way to validate, compare, or optimize any of it.

When did 'we believe it's working' become an acceptable standard for a nine-figure technology investment?

The enterprises that will define the next era of competitive advantage are those that treat agents as the capital assets they are, with performance management, ROI attribution, utilization benchmarking, and continuous optimization cycles. That discipline requires a management layer purpose-built for agents. It cannot be assembled from monitoring dashboards and intuition.

Why traditional IT governance cannot manage AI agents

The instinct, and the wrong one, is to assume that existing enterprise infrastructure can be extended to govern AI agents. SIEM platforms. Cloud cost management tools. API gateways. Observability suites. These are mature, well-funded capabilities. Surely they can be configured to monitor agents as well.

They cannot. And understanding why is essential to understanding what needs to be built.

Traditional software systems are deterministic. Given the same input and state, they produce the same output. Every monitoring, compliance, and access control framework that enterprises have invested in for the last twenty years is built on this assumption. Agents are not deterministic. They are probabilistic reasoning systems: they make decisions, learn from interactions, delegate tasks to other agents, and exhibit behavior patterns that look like anomalies to conventional monitoring but may actually represent optimization. An agent shifting its reasoning patterns over time is not malfunctioning. It may be adapting. It may also be accumulating bias. Without infrastructure designed to tell the difference, organizations cannot know which.

The governance gap at a glance

The implications cascade. A compliance audit tool designed for deterministic systems catches violations after the fact, after the batch processing cycle, after the human review, after the weekly report. An agent operating at business speed can generate hundreds of decisions per minute. By the time a traditional audit detects a compliance gap, the agent has already made thousands of additional decisions downstream. The damage has compounded before the audit is complete.

Up to $10,000/month in runaway costs can be generated by a single unmanaged AI agent use case in enterprise environments, with uncontrolled agentic loops identified as a leading cause of spiraling cloud spend. (Gartner, February 2026)
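One common mitigation for uncontrolled agentic loops is a hard per-run budget on steps and spend. The following is a minimal sketch under stated assumptions: `RunBudget`, `BudgetExceeded`, and the limit values are hypothetical names chosen for illustration, not the API of any product mentioned in this article.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when an agent run would exceed its step or spend limit."""


@dataclass
class RunBudget:
    max_steps: int          # hypothetical cap on loop iterations per run
    max_cost_usd: float     # hypothetical cap on spend per run
    steps: int = 0
    cost_usd: float = 0.0

    def charge(self, step_cost_usd: float) -> None:
        """Record one agent step, refusing it if a limit would be crossed."""
        if self.steps + 1 > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} reached")
        if self.cost_usd + step_cost_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost limit ${self.max_cost_usd:.2f} reached")
        self.steps += 1
        self.cost_usd += step_cost_usd


def run_agent_loop(budget: RunBudget, step_cost_usd: float) -> int:
    """Simulate an agent that never decides it is done; the budget ends the run."""
    try:
        while True:
            budget.charge(step_cost_usd)
    except BudgetExceeded:
        return budget.steps
```

The check runs before each step is charged, so a run stops at the last step that still fits inside both limits. In a real deployment the exception would more plausibly trigger an alert or a human escalation than a silent stop.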

Multi-agent accountability is a related but distinct failure mode. Modern enterprise agent deployments are not single agents; they are networks, with orchestrator agents delegating tasks to specialist agents, which in turn call tools, APIs, and other agents. When an outcome causes harm, the decision chain runs through four, five, or six agents. Traditional identity management was designed for humans. It tracks who authenticated. It does not track which agent in a delegation chain made the probabilistic inference that drove a consequential decision. That attribution gap is not a configuration problem. It is an architectural one.
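To make the attribution gap concrete, here is a hedged sketch of the kind of record that would let a decision be traced back through a delegation chain. The `DecisionRecord` fields and the `trace_chain` helper are assumptions made for illustration; they do not describe any specific vendor's schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    agent_id: str
    action: str
    parent_id: Optional[str]  # decision that delegated this one; None at the root


def trace_chain(records: dict[str, DecisionRecord], decision_id: str) -> list[str]:
    """Walk parent links from a decision back to the originating request.

    Returns agent ids ordered from the root orchestrator to the deciding agent.
    """
    chain: list[str] = []
    seen: set[str] = set()
    current: Optional[str] = decision_id
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle in delegation chain at {current}")
        seen.add(current)
        record = records[current]
        chain.append(record.agent_id)
        current = record.parent_id
    return list(reversed(chain))


# Hypothetical three-agent chain: orchestrator -> specialist -> scorer.
log = [
    DecisionRecord("d1", "orchestrator", "route_claim", None),
    DecisionRecord("d2", "claims-specialist", "request_fraud_score", "d1"),
    DecisionRecord("d3", "fraud-scorer", "flag_as_fraud", "d2"),
]
records = {r.decision_id: r for r in log}
```

Calling `trace_chain(records, "d3")` returns the agents in delegation order. The design point is that the parent link has to be written at decision time; it cannot be reconstructed afterwards from authentication logs alone.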

When something goes wrong in a multi-agent workflow, can your organization trace exactly which decision, by which agent, triggered the outcome? If not, what does that mean for your liability posture?

AI agent compliance and regulatory risk

For anyone still treating agent governance as a future concern, the regulatory environment has removed that option. The EU AI Act entered into force in August 2024, with its obligations phasing in over the following years. It is not aspirational language. It is enforceable law, with provisions that directly implicate autonomous agent deployments in high-risk categories: HR, financial services, healthcare, customer-facing operations, and critical infrastructure.

The Act requires, among other obligations: documentation of decision-making processes, bias testing and mitigation, human oversight mechanisms, incident reporting frameworks, and transparency requirements for automated decisions affecting individuals. Every organization that deploys autonomous agents in regulated contexts is now carrying compliance obligations that most of its current governance infrastructure cannot satisfy.

Non-compliance penalties under the EU AI Act reach €35 million or 7% of global annual turnover, whichever is higher, for the most serious violations, and the Act's extraterritorial scope means it applies to any organization whose agent operations affect EU residents, regardless of where the organization is headquartered.

GDPR's right-to-explanation principle, which has been in effect since 2018 and is now being enforced with increasing sophistication, requires organizations to provide meaningful information about automated decision-making affecting individuals. That obligation cannot be satisfied by an organization that cannot reconstruct the reasoning chain of its own agents. HIPAA audit control requirements apply to any agent processing protected health information. SOX controls extend to agents participating in financial reporting workflows.

When your compliance team asks for a full audit trail of every consequential decision an agent made last quarter, across all your frameworks, all your cloud environments, what will they receive?

The enforcement trajectory is clear. Regulators are building AI competency faster than most enterprises are building AI agent governance infrastructure. The organizations that implement an Agent Management Platform before an inquiry will experience compliance as a competitive differentiator. The ones that implement it after will experience it as a crisis response, paying remediation costs that dwarf the original investment in governance.

"The EU AI Act does not transfer liability to AI vendors. It places it squarely on the enterprises that deploy AI systems, and it expects them to prove governance, not claim it."

AI agents as digital workers: The management framework enterprises are missing

The most important conceptual shift in approaching agent governance is also the simplest: stop thinking about agents as software to be monitored and start thinking about them as digital workers to be managed. The infrastructure required follows directly from that reframe.

When an organization scales from ten employees to a thousand, it does not simply provision more laptops. It implements HR systems, performance management frameworks, onboarding processes, productivity benchmarks, compliance training, reporting hierarchies, and compensation structures tied to demonstrated value. It builds the organizational infrastructure to ensure that its workforce operates effectively, within policy, and in alignment with business objectives.

An enterprise deploying its first hundred AI agents faces an identical set of management requirements, and historically has had none of the corresponding infrastructure. The analogy extends precisely to governance: just as human workers need different oversight depending on seniority, role, and risk exposure, agents need governance frameworks calibrated to their function, capability, and the sensitivity of the decisions they make. A customer data processing agent requires fundamentally different compliance monitoring than an internal scheduling agent. A multi-agent orchestration workflow handling financial transactions requires different oversight than a single-task document summarizer.

Would any organization deploy a thousand new employees with no performance management system, no compliance training framework, and no ability to attribute their work to business outcomes? Then why are we doing exactly that with AI agents?

The workforce management framing also clarifies what has been missing from the point solutions dominating the current market conversation. An agent observability tool is analogous to a biometric time-tracking system: it tells you the agent showed up and what it did, but nothing about whether it did it well, whether it was worth the cost, or whether it operated within appropriate boundaries. An identity management system is the equivalent of an access badge, necessary but nowhere near sufficient for organizational governance.

What the agentic era requires is the equivalent of a complete HR and workforce management platform, redesigned from the ground up for autonomous digital workers. That is the Agent Management Platform category, and it does not yet exist at scale in most enterprises.

Key capabilities of an Agent Management Platform

Given the scope of the challenge, it is worth being precise about what an Agent Management Platform actually is, because a significant portion of what is currently being marketed as agent governance falls well short. Organizations making procurement decisions in this space need a clear standard against which to evaluate claims.

Platform-grade Agent Management is not agent monitoring with a better dashboard. It is not cloud-native identity management extended to non-human principals. It is not a compliance checklist bolted onto an existing observability tool. It is a unified system that treats the management of digital workers with the same rigor that workforce management platforms apply to human ones, across five essential capability dimensions.

The critical architectural requirement is that these five capabilities function as a unified system, not a collection of modules. The insights from each dimension must inform real-time decisions in the others. Reasoning drift detected in the governance layer should immediately update performance metrics. ROI data should inform escalation routing priorities. Financial attribution should influence policy enforcement decisions. Token usage patterns should surface in investment intelligence.

A platform that achieves this integration becomes the operational nervous system of enterprise agent deployments, not an additional monitoring layer, but the infrastructure that makes agent operations sustainable and scalable. The switching costs of that infrastructure, once embedded, are enormous. The value it generates, compounded over the lifecycle of an expanding agent estate, is transformational.

According to Forrester, enterprises that implement unified AI governance platforms report 3.2x higher confidence in their AI investment decisions and 2.7x faster response to compliance requirements than those relying on point solutions, a gap that widens as agent deployments scale.

By 2030, Agent Management Platforms will dominate 80% of all successful agent-to-agent interactions and absorb or displace fragmented AI tool providers, capturing over 60% of AI's compounded value. (Gartner, October 2025)

If your current approach cannot tell you which agents are generating value, which are creating risk, and which should be deprecated, is it actually governance, or is it theater?

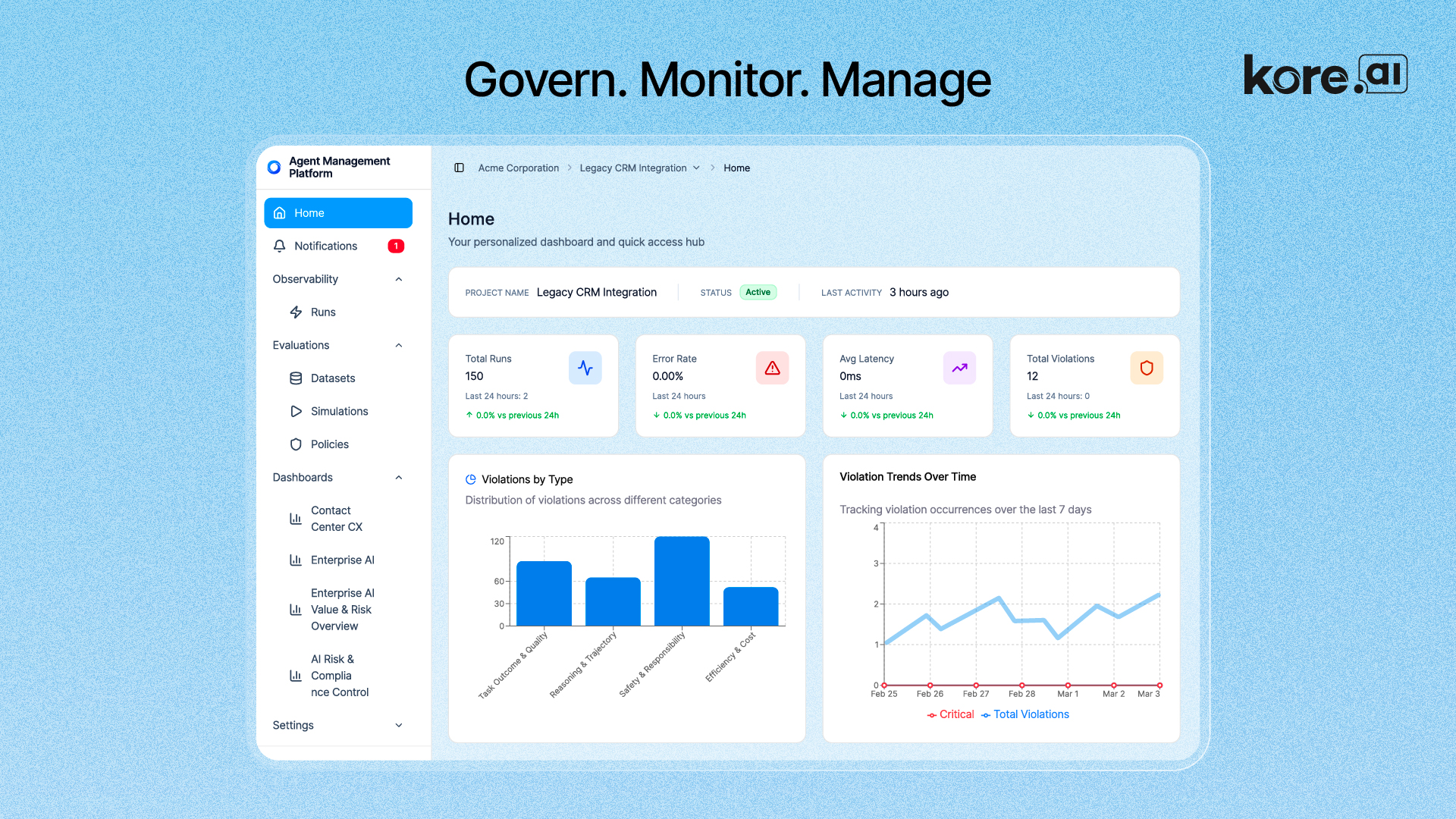

Kore.ai Agent Management Platform: Built for enterprise-scale AI governance

In a market crowded with point solutions, Kore.ai has taken a structurally different position: building an Agent Management Platform that treats AI agents as the enterprise's most consequential new class of digital worker, and constructing the governance infrastructure accordingly. The result is not an observability add-on or a compliance checklist. It is a centralized control plane that unifies policy enforcement, real-time oversight, financial attribution, risk management, and business value measurement across every AI agent in an enterprise, regardless of which platform, framework, or vendor built them.

That last point is critical. Kore.ai's AMP is explicitly designed to govern agents built on third-party systems: LangGraph, CrewAI, AutoGen, Google ADK, AWS AgentCore, Microsoft Foundry, Salesforce Agentforce, and any other framework an enterprise has deployed. This vendor-agnostic architecture means organizations are not required to rebuild their agent estate on Kore.ai tooling to gain governance. They can plug their existing agents into the AMP's control plane and begin measuring, governing, and optimizing from day one.

What the platform actually does: Four problems, four answers

Kore.ai's AMP is organized around four systemic challenges that emerge when enterprises scale AI agents beyond pilots. Each has a specific architectural response.

Operational blind spots

As agent estates grow, teams lose visibility into how agents are performing. Prompts, model responses, tool calls, and workflow decisions become opaque. The AMP's Observability layer provides end-to-end tracing with full session traces, execution timing, and step-level visibility across single and multi-agent conversations. Every agent interaction is captured: model invocation, tool usage, reasoning steps, intermediate decisions, and final outputs. Telemetry is correlated across agents, models, tools, and data sources and surfaced through pre-built dashboards with real-time anomaly detection and event-triggered alerting. Teams can answer the questions that matter: why did this agent make that decision, which model was used, where is latency concentrated, and what is the cost per interaction.

Uncontrolled AI spend

Token costs grow rapidly with AI adoption, expensive models get overused, and cloud bills provide no granular attribution. The AMP's financial intelligence layer delivers detailed cost tracking across agents, models, and business units. Spend is attributed at the level of individual agent interactions, correlated against performance outcomes, and surfaced through role-based dashboards. The platform identifies redundant agents, underperforming use cases, and inefficient model routing, giving operations teams the specific data needed to optimize spend without sacrificing speed or quality. Cost per interaction is trackable, comparable, and actionable.
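As an illustration of interaction-level attribution, the sketch below prices each interaction from its token counts and rolls the result up by agent or business unit. The `Interaction` fields, the model names, and the prices in `PRICE_PER_1K` are all hypothetical placeholders, not real rates or the platform's actual data model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    agent_id: str
    business_unit: str
    model: str
    input_tokens: int
    output_tokens: int


# Hypothetical prices in USD per 1,000 tokens: (input, output). Not real rates.
PRICE_PER_1K = {"large-model": (0.01, 0.03), "small-model": (0.001, 0.002)}


def interaction_cost(i: Interaction) -> float:
    """Price a single interaction from its token counts."""
    price_in, price_out = PRICE_PER_1K[i.model]
    return i.input_tokens / 1000 * price_in + i.output_tokens / 1000 * price_out


def cost_by(interactions: list[Interaction], key: str) -> dict[str, float]:
    """Sum interaction cost grouped by an attribute name such as 'agent_id'."""
    totals: dict[str, float] = defaultdict(float)
    for i in interactions:
        totals[getattr(i, key)] += interaction_cost(i)
    return dict(totals)
```

Because cost is computed per interaction before it is grouped, the same records can be viewed as `cost_by(data, "agent_id")`, `cost_by(data, "business_unit")`, or `cost_by(data, "model")` without re-deriving anything from a cloud bill.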

Regulatory and policy risk

Governance policies exist in most organizations, but are inconsistently enforced as agent deployments scale. The AMP's Policy Management layer centralizes governance across all AI initiatives, continuously monitoring every agent against defined compliance rules. Real-time alerts flag policy breaches before they escalate. Audit logs are immutable, timestamped, and user-attributed, generating compliance evidence that satisfies GDPR, HIPAA, SOC 2, PCI DSS, and emerging EU AI Act requirements. The platform moves governance from a quarterly audit exercise to a continuous, automated enforcement function embedded in production operations.
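One widely used way to make a log tamper-evident is to chain entries by hash, so that editing any earlier entry invalidates everything after it. The sketch below assumes that approach purely for illustration; the article does not say how the platform implements immutability, and the function names here are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder previous-hash for the first entry


def _digest(body: dict) -> str:
    """Hash a canonical JSON encoding of the entry body."""
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], user: str, event: str, detail: dict) -> dict:
    """Append a timestamped, user-attributed entry linked to the previous one."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "event": event,
        "detail": detail,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Return True only if every entry's hash and predecessor link check out."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

A chain like this detects edits and mid-chain deletions, but not truncation of the most recent entries; detecting that requires anchoring the latest hash somewhere outside the log.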

Unclear business impact

Most organizations struggle to demonstrate that AI is actually delivering measurable value. The AMP's ROI measurement engine directly links agent activity to business outcomes: revenue impact, cost savings, productivity gains, and error rate reduction. ROI calculations are based on five operational baselines: average manual cost per task, average revenue per task, average manual handling time, monthly FTE capacity, and pre-AI error rate. Against these baselines, the platform computes post-AI telemetry and generates precise ROI figures by agent, by workflow, and by business unit, giving leadership the evidence needed to prioritize the initiatives that drive the most value and deprecate the ones that do not.
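The five baselines named above can be combined with post-AI telemetry in many ways. The following is one plausible sketch, not the platform's actual calculation: the formulas, the `Baselines` and `AgentTelemetry` types, and the choice to define ROI as net cost savings divided by AI spend are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baselines:
    manual_cost_per_task: float      # average manual cost per task
    revenue_per_task: float          # average revenue per task
    manual_minutes_per_task: float   # average manual handling time
    monthly_fte_hours: float         # monthly capacity of one FTE
    pre_ai_error_rate: float         # error rate before the agent, 0..1


@dataclass(frozen=True)
class AgentTelemetry:
    tasks_completed: int
    ai_cost_total: float             # model, tooling, and platform spend
    ai_minutes_per_task: float
    post_ai_error_rate: float


def monthly_roi(b: Baselines, t: AgentTelemetry) -> dict[str, float]:
    """Compare one month of agent telemetry against the pre-AI baselines."""
    cost_savings = t.tasks_completed * b.manual_cost_per_task - t.ai_cost_total
    hours_saved = (
        t.tasks_completed * (b.manual_minutes_per_task - t.ai_minutes_per_task) / 60
    )
    return {
        "cost_savings": cost_savings,
        "revenue_handled": t.tasks_completed * b.revenue_per_task,
        "hours_saved": hours_saved,
        "fte_equivalent": hours_saved / b.monthly_fte_hours,
        "errors_avoided": t.tasks_completed
        * (b.pre_ai_error_rate - t.post_ai_error_rate),
        "roi": cost_savings / t.ai_cost_total,  # net savings per dollar of AI spend
    }
```

Keeping the baselines in a separate, frozen record matters more than the exact formula: it fixes the denominator so that every agent is measured against the same pre-AI numbers instead of figures self-reported by the team that owns it.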

The technical architecture: What is actually under the hood

Understanding the AMP requires understanding the agent lifecycle it governs. Kore.ai structures this across five stages: Build, Test, Deploy, Monitor, and Optimize. The platform provides native tooling for each stage while also accepting agents from external systems at any stage via secure telemetry ingestion. For enterprises building on the Kore.ai Agent Platform, the full lifecycle is managed within a single environment. For enterprises managing third-party agents, the AMP connects through standard protocols and APIs.

Multi-Agent Orchestration is the foundational capability. The platform supports three orchestration patterns natively: Supervisor (an orchestrator agent breaks complex tasks into subtasks, delegates to specialized agents, and verifies outputs), Adaptive Agent Network (agents dynamically coordinate based on context and performance signals rather than fixed workflows), and Custom (enterprises define their own orchestration logic for use cases that do not fit standard patterns). Agents communicate through A2A (Agent-to-Agent) protocols and interact with enterprise tools through MCP (Model Context Protocol), enabling interoperability across heterogeneous multi-agent environments without hard-coded integrations. Each agent can hold both short-term session memory and long-term persistent memory, enabling continuity across interactions and more contextually accurate task execution.
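The Supervisor pattern described above (decompose, delegate, verify) can be sketched in a few lines. This is a generic illustration with plain functions standing in for agents; `supervise`, `plan`, and the specialist names are hypothetical and do not represent the Kore.ai API, A2A, or MCP.

```python
from typing import Callable

Specialist = Callable[[str], str]


def supervise(
    task: str,
    plan: Callable[[str], list[tuple[str, str]]],
    specialists: dict[str, Specialist],
    verify: Callable[[str, str], bool],
) -> dict[str, str]:
    """Split a task, delegate each subtask, and accept only verified outputs."""
    results: dict[str, str] = {}
    for role, subtask in plan(task):
        output = specialists[role](subtask)
        if not verify(subtask, output):
            raise ValueError(f"{role} output failed verification for: {subtask}")
        results[subtask] = output
    return results


# Stand-ins for real agents: each specialist is just a function here.
def plan_refund(task: str) -> list[tuple[str, str]]:
    return [("lookup", f"find order for: {task}"), ("refund", f"issue refund for: {task}")]


specialists = {
    "lookup": lambda s: f"order located ({s})",
    "refund": lambda s: f"refund queued ({s})",
}


def non_empty(subtask: str, output: str) -> bool:
    return bool(output.strip())
```

Running `supervise("order 42", plan_refund, specialists, non_empty)` returns one verified output per subtask. The verification hook is the part that distinguishes a supervisor from a simple dispatcher, which is why it is a required argument here.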

The AI Engineering Toolchain is where pre-production quality control happens. Prompt Studio provides a structured environment to design, test, version, compare, and govern prompts as first-class enterprise assets, with role-based governance and a library of 75+ pre-built prompt templates. The Model Hub provides unified management across commercial models (GPT-4, Claude, Gemini), open-source models from Hugging Face, fine-tuned variants, and bring-your-own models, all with consistent controls and one-click activation. Model Factory enables systematic fine-tuning with dataset management, test validation, hardware optimization, and built-in review controls. Evaluation Studio combines model-level and agentic evaluation in a single framework: model outputs are assessed for quality, safety, bias, and cost; agent workflows are assessed for goal completion, instruction adherence, tool usage accuracy, error recovery, and multi-step decision quality. Simulation-based testing validates agent behavior across realistic scenarios and personas before any change reaches production. All evaluation runs are repeatable, exportable, and suitable for compliance audits.

The Security and Governance layer is embedded directly into the platform rather than bolted on externally. The Enterprise Guardrails Framework scans both inputs and outputs in real time, enforcing organizational and regulatory standards. Specific scanners address prompt injection prevention, toxicity detection, bias detection, PII anonymization, ban-topic enforcement, and relevance alignment checks. All guardrail policies are configurable per use case. Role-Based Access Control (RBAC) enforces fine-grained permissions across the platform: who can build agents, who can approve deployments, who can view financial data, who can modify governance policies, and who can access what data. Version control maintains a complete history of every agent, model, and workflow change, enabling rollback and full traceability. Audit logs capture every key event: user actions, configuration changes, agent decisions, and policy enforcement outcomes, all with timestamps and user attribution.
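To show what an input scanner does in principle, here is a deliberately small sketch covering two of the scanner types listed above: PII anonymization and banned-topic enforcement. The regular expressions, the `scan` function, and the `ScanResult` type are illustrative assumptions; production guardrails are considerably more thorough than pattern matching.

```python
import re
from dataclasses import dataclass, field

# Simplified patterns for illustration; real PII detection needs far more coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class ScanResult:
    text: str
    allowed: bool = True
    findings: list[str] = field(default_factory=list)


def scan(text: str, banned_topics: set[str]) -> ScanResult:
    """Redact simple PII patterns, then block text that mentions a banned topic."""
    result = ScanResult(text=text)
    for label, pattern in (("email", EMAIL), ("ssn", US_SSN)):
        result.text, count = pattern.subn(f"[{label.upper()}]", result.text)
        if count:
            result.findings.append(f"pii:{label}")
    lowered = result.text.lower()
    for topic in sorted(banned_topics):
        if topic.lower() in lowered:
            result.allowed = False
            result.findings.append(f"banned_topic:{topic}")
    return result
```

The same function can be applied to both the prompt going into a model and the response coming out. Returning findings alongside the redacted text gives the audit log something concrete to record for each enforcement outcome.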

Search and Data AI provides the knowledge layer that keeps agents grounded in accurate enterprise information. The platform offers 100+ pre-built search connectors spanning collaboration tools (Slack, Microsoft Teams, Outlook), productivity systems (Jira, Asana, ServiceNow, Confluence, SharePoint), CRM and sales tools (Salesforce, HubSpot, Zendesk), cloud storage (Google Drive, Box, OneDrive, S3, Azure Storage), code and IT operations (GitHub Enterprise, GitLab, PagerDuty, Datadog), and custom connectors for enterprise-specific systems. Retrieval uses a hybrid approach combining semantic vector search with keyword search, enhanced by a Knowledge Graph for structured reasoning and a configurable Agentic RAG pipeline where agents actively determine how, when, and from where to retrieve data. Teachability enables continuous improvement through built-in feedback loops, allowing agents to adapt based on user corrections and task outcomes over time.
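Hybrid retrieval has to merge two differently scored result lists into one ranking. Reciprocal rank fusion is a common technique for that, and the sketch below uses it purely as an example; the article does not state which fusion method the platform uses, and the document ids are hypothetical.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document ids into a single ranking.

    Each document scores 1 / (k + rank) in every list where it appears,
    so items ranked well by both retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending score, breaking ties by id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["a", "b", "c"]    # hypothetical semantic search results, best first
keyword_hits = ["b", "d", "a"]   # hypothetical keyword search results, best first
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
# fused == ["b", "a", "d", "c"]
```

Rank-based fusion avoids having to normalize cosine similarities against keyword scores, which live on different scales. The constant `k = 60` is a conventional default that dampens the influence of top-ranked items.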

The Agent Management Platform layer itself provides centralized lifecycle management across all agents in a project-based organization model. Agents built on Kore.ai and agents built on third-party systems are registered in a unified repository. Policy management applies consistent controls across every AI initiative. Observability surfaces real-time performance data. Evaluation runs pre-built and custom validation checks on AI systems before deployment. Role-based dashboards give builders, governance teams, and executive leadership appropriately scoped views of performance, cost, compliance status, and business outcome data. The metrics computation engine continuously processes raw telemetry into standardized performance, quality, reliability, governance, and business metrics. Dataset curation from production runs converts real telemetry into trusted evaluation datasets for regression testing and continuous improvement.

Integrations: Model-agnostic, cloud-agnostic, channel-agnostic

The platform operates on a fully agnostic integration architecture. Any model can be connected: commercial, open-source, fine-tuned, or custom. Any cloud can host deployments: AWS, Azure, Google Cloud, or private infrastructure and on-premise environments. Any communication channel can surface agent interactions: Slack, Microsoft Teams, voice, web, and mobile. Any enterprise data source can feed agent knowledge through the 100+ connector library. This architectural choice directly addresses the most common governance failure in enterprise AI: platforms that force organizations to rebuild their entire technology stack to achieve governance. Kore.ai's AMP provides governance across whatever technology stack already exists.

The competitive differentiation this creates against hyperscalers (AWS AgentCore, Microsoft Foundry) is significant: those platforms govern only agents built within their own ecosystems. Kore.ai governs everything, regardless of origin. Against pure-play governance platforms, the differentiation is depth: Kore.ai combines policy enforcement with financial intelligence, ROI attribution, and end-to-end workflow governance in a single platform rather than requiring additional integration work. Against open-source frameworks, the differentiation is enterprise readiness: governance, security, compliance, and operational controls are built in rather than self-assembled.

Gartner explicitly identifies Kore.ai as a named vendor in the emerging Agent Management Platform category, alongside the observation that AMP providers who combine governance with financial and operational intelligence will capture the coordination and execution layer that represents over 60% of AI's compounded value through 2030.

The architecture Kore.ai has built is precisely this: the centralized hub where governance, performance, and value converge.

Why enterprises must act now on AI agent governance: The cost of waiting

Enterprise history offers a reliable pattern: the organizations that invest in foundational management infrastructure during technology transitions, not after the crisis that makes investment unavoidable, capture advantages that compound for years. Cloud governance frameworks built before regulatory pressure enabled faster and more confident cloud adoption. Data governance frameworks implemented before GDPR enforcement turned compliance into a differentiator. The same principle applies directly to AI agent governance, and the Agent Management Platform category is where that governance infrastructure now lives.

The agent management moment follows the same logic on a compressed timeline. The organizations that implement Agent Management Platforms now, while their agent estates are still manageable, before regulatory scrutiny intensifies, while the institutional knowledge of their agent operations can be captured and systematized, will enter the next phase of the agentic era with a structural advantage that their competitors will spend years trying to replicate.

Gartner estimates that by 2027, organizations with mature AI governance frameworks will outperform peers on AI-related business outcomes by 40%, not because of the agents they build, but because of their ability to govern, optimize, and scale them.

The organizations that wait will find themselves managing the consequences of agent sprawl, unattributed spend, compliance gaps, and operational chaos at exactly the moment that competitive pressure demands acceleration, not consolidation. The remediation cost, in audit fees, regulatory penalties, re-engineering effort, and lost competitive ground, will dwarf the investment in governance infrastructure that could have been built proactively.

The question every organization deploying AI agents needs to answer honestly: are we building the infrastructure to manage this workforce, or are we assuming the problem will surface slowly enough to address later?

It won't. The agents are already running. The only choice remaining is whether they run governed.

"Every enterprise deploying agents will eventually hit the agent management wall. The only variable is whether they hit it with infrastructure in place, or without."
