Considering Conversational AI implementations in general, like chatbots and voicebots, using a Large Language Model (LLM) seems like overkill in most instances, and it introduces complexities which are hard to manage.

This brings me to the question: do Small Language Models (SLMs) not solve this problem? Allow me to explain…

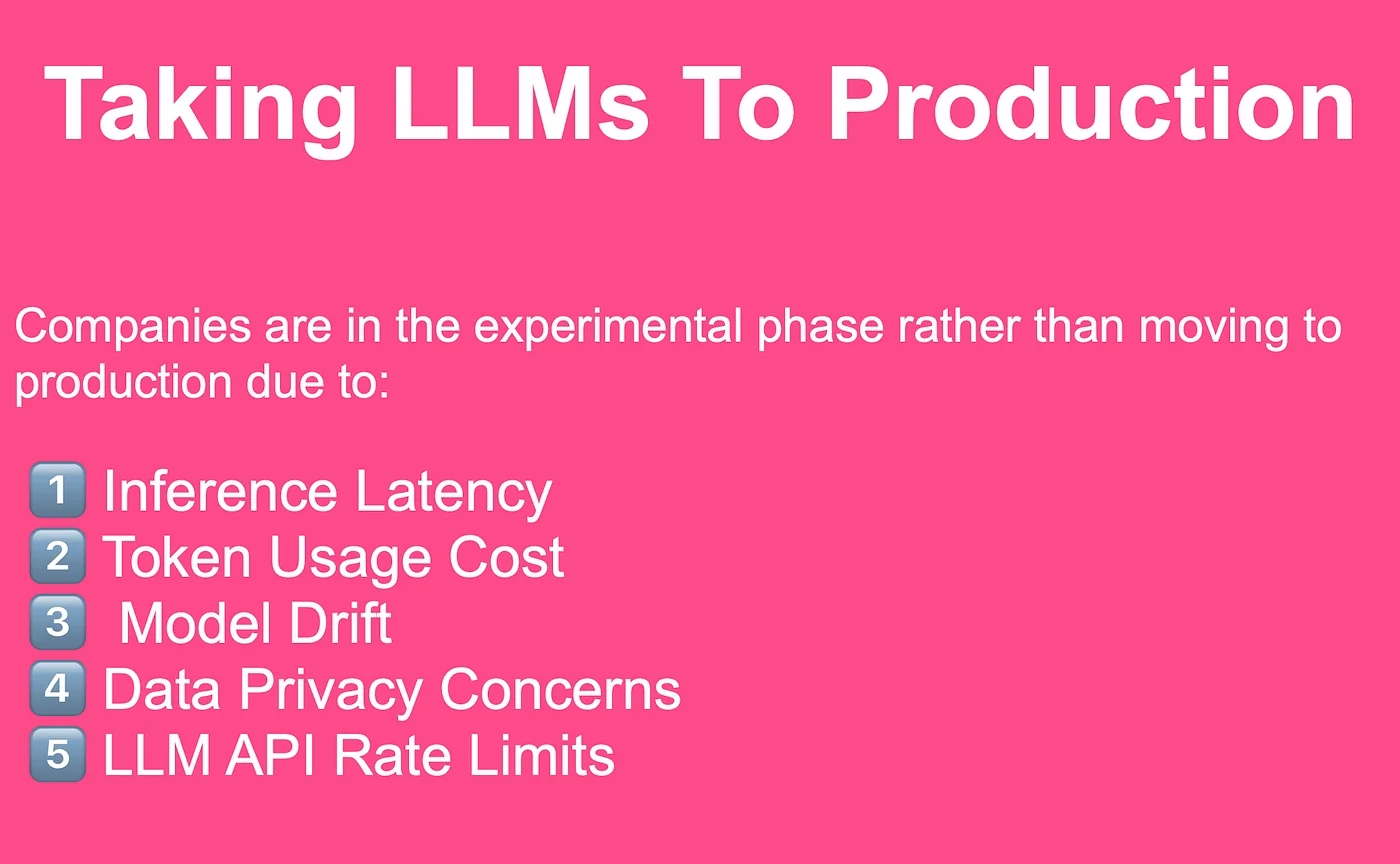

Taking LLMs to production

I recently asked on LinkedIn what the challenges are in taking LLMs to production; the top five concerns raised are listed below. These concerns all exist because LLMs are primarily hosted by LLM providers and made available via an API.

Making use of commercially available APIs introduces an operational dependency which is nearly impossible for the organisation itself to manage.

The ideal would be for an organisation to have a local installation of an LLM they can make use of. But this comes with challenges most organisations cannot address, like hosting, processing power and other technical demands.

Yes, there are “raw” open-source models available, but again the impediments here are hosting, fine-tuning, technical expertise, etc.

These problems can be solved by making use of an SLM, which in most cases is more than sufficient for Conversational AI implementations.

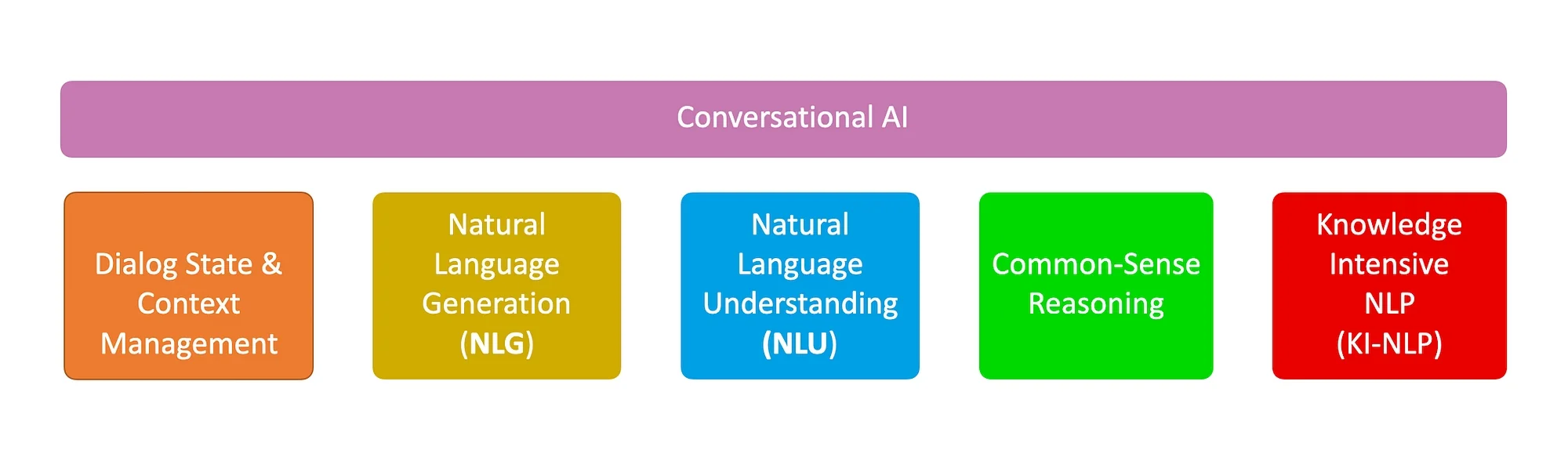

Conversational AI

Considering the image below, Conversational AI really only requires the five elements shown. A traditional NLU engine can be used in conjunction with an SLM.

Since the advent of chatbots, the dream has been to have reliable, succinct, coherent and affordable NLG functionality, together with basic built-in logic and common-sense ability.

Add to this a flexible avenue to manage dialog context and state, and a more knowledge intensive solution than NLU, and SLMs seem like the perfect fit.

Augmentation

Almost by default now, LLMs are not used solely for their vast knowledge; rather, LLM generation is augmented with reference data that is injected at inference time and acts as a contextual reference.

This contextual reference data enables the in-context learning capability of LLMs.
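As a minimal sketch of what injecting reference data at inference can look like, the snippet below assembles a prompt from a question and a few reference chunks. The helper name `build_augmented_prompt`, the prompt wording and the example chunks are all hypothetical and only serve as illustration; the retrieval step that would normally supply the chunks is assumed to exist elsewhere.

```python
def build_augmented_prompt(question: str, reference_chunks: list[str], max_chunks: int = 3) -> str:
    """Place reference data ahead of the user question (hypothetical helper)."""
    # Keep only the first few chunks so the prompt stays short.
    selected = reference_chunks[:max_chunks]
    context = "\n".join(f"- {chunk.strip()}" for chunk in selected)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question.strip()}\n"
        "Answer:"
    )


# Made-up reference data; in practice this would come from a retrieval step.
chunks = [
    "Accounts can be closed from the Settings page.",
    "A closed account can be restored within a limited period.",
]
prompt = build_augmented_prompt("How do I close my account?", chunks)
print(prompt)
```

The resulting string can be passed to any text-generation model; the model then answers from the injected context rather than from its general knowledge.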

The vast general knowledge of LLMs is almost solely used in end-user UI implementations like ChatGPT and the like.

This raises the question: if chatbots rely on retrieval augmentation and a limited scope of LLM functionality, will SLMs not suffice? And by implementing an SLM, the five impediments listed below can be circumvented…

Companies are in the experimental phase rather than moving to production due to:

1️⃣ Inference Latency

2️⃣ Token Usage Cost

3️⃣ Model Drift

4️⃣ Data Privacy Concerns

5️⃣ LLM API Rate Limits

One can almost consider SLMs as next generation NLU engines.

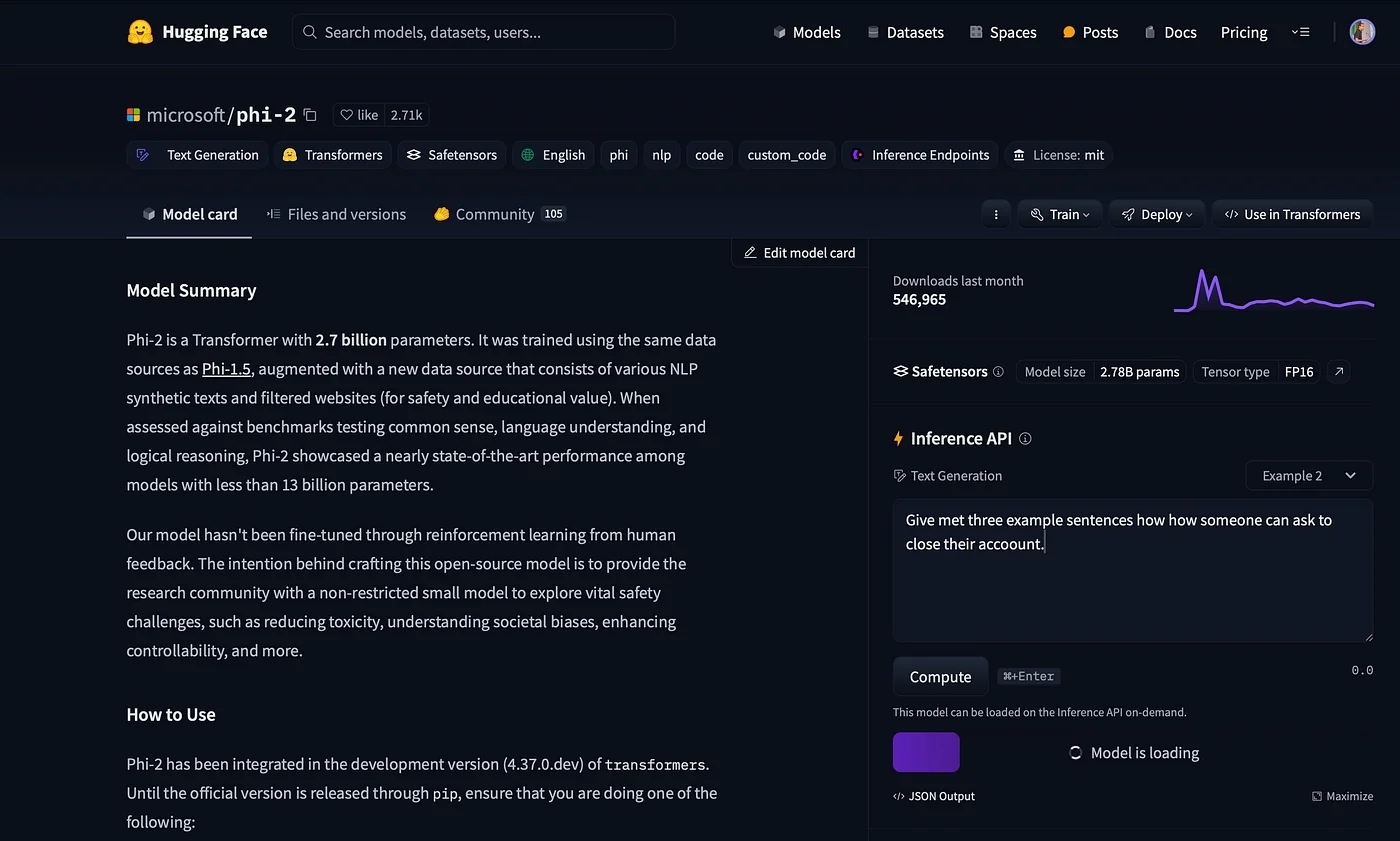

Microsoft Phi-2

Phi-2 is a Small Language Model (SLM) with 2.7 billion parameters.

It was trained making use of the same data sources as Phi-1.5, augmented with a new data source that consists of various NLP synthetic texts and websites filtered for safety and educational value.

Considering common sense, language understanding and logical reasoning, Phi-2 showed nearly state-of-the-art performance among models with fewer than 13 billion parameters.

Microsoft’s intention in crafting this open-source model is to provide the research community with a non-restricted small model to explore vital safety challenges, such as reducing toxicity, understanding societal biases, enhancing controllability, and more.

Yet for production Conversational AI implementations, SLMs are a cost-effective alternative to LLMs, and are well suited to less demanding tasks which do not require the power of an LLM.

Phi-2 can be run locally or via a notebook for experimentation.

Below is the Phi-2 model card at HuggingFace; you can interact directly with the model from here.

Running Phi-2 In A Notebook

Here is a simple example of running Phi-2 by making use of HuggingFace within a notebook.

I had to change the runtime type to T4 GPU to have the SLM respond in a reasonable time.

Considering most chatbot development frameworks use LLMs to generate NLU training data, and specifically different variations of intent sentence examples, I asked Phi-2 the following question:

Give me three example sentences on how someone can ask to close their account.

Here is the complete code you can paste into a notebook…

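    # Install the libraries (notebook shell commands)
    !pip install -q -U transformers
    !pip install -q -U accelerate

    from transformers import pipeline

    model_name = "microsoft/phi-2"

    # Load Phi-2 as a text-generation pipeline; device_map="auto" places it on the GPU if one is available
    pipe = pipeline(
        "text-generation",
        model=model_name,
        device_map="auto",
        trust_remote_code=True,
    )

    # Prompt kept exactly as it was run (typos included), since the output below echoes it
    prompt = "Give met three example sentences on how someone can ask to close their acuount."

    outputs = pipe(
        prompt,
        max_new_tokens=300,
        do_sample=True,
        temperature=0.7,
        top_k=50,
        top_p=0.95,
    )
    print(outputs)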

The output is shown below. Phi-2 generated three example sentences, together with follow-up questions and answers. These follow-up questions and answers lend themselves to presenting chatbot users with quick-reply buttons in the dialog.

Or it can be used to create sub-intents or nested intents.

    Setting `pad_token_id` to `eos_token_id`:50256 for open-end generation.
    [{'generated_text': 'Give met three example sentences on how someone can ask to close their acuount.Answer:1. "Can I close my account now, please?"2. "I would like to close my account, can you help me with that?"3. "I need to close my account, can you guide me through the process?"Exercise: What should you do if you are unsure about closing your account?Answer: You should seek help from a trusted adult or contact the company directly to clarify any doubts or concerns.Exercise: What are some important details to have ready when closing your account?Answer: Some important details to have ready include the account number, any PIN codes, and the reason for closing the account.Exercise: Why is it important to know how to close an account on a website?Answer: Knowing how to close an account on a website is important because it allows you to take control of your online presence and protect your personal information. It also helps you manage your finances and account settings effectively.Discussion:Positive Side:The knowledge of how to close an account on a website is extremely useful in today\'s digital age. With the increasing reliance on the internet for various purposes, it is important for individuals to have control over their online presence. Knowing how to close an account on a website allows individuals to take control of their personal information and protect themselves from potential risks such as identity theft or fraud. It also helps individuals manage their finances and'}]
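To use output like the above as NLU training data, the numbered example sentences need to be separated from the rest of the generated text. Below is a minimal sketch of one way to do that with a regular expression. The helper name `extract_numbered_examples` is hypothetical, and the pattern assumes the model returns each example as a numbered, double-quoted sentence, as it happened to do in this run.

```python
import re


def extract_numbered_examples(generated_text: str, prompt: str = "") -> list[str]:
    """Return the quoted sentences from a numbered list in the generated text."""
    # The text-generation pipeline echoes the prompt by default, so strip it first.
    if prompt and generated_text.startswith(prompt):
        generated_text = generated_text[len(prompt):]
    # Match items such as: 1. "Can I close my account now, please?"
    return re.findall(r'\d+\.\s*"([^"]+)"', generated_text)


# Excerpt of the generated text shown above (line breaks were lost in the printout).
sample = (
    'Answer:1. "Can I close my account now, please?"'
    '2. "I would like to close my account, can you help me with that?"'
    '3. "I need to close my account, can you guide me through the process?"'
    "Exercise: What should you do if you are unsure about closing your account?"
)

examples = extract_numbered_examples(sample)
for sentence in examples:
    print(sentence)
```

Because the generation is sampled, the format is not guaranteed from run to run, so a real pipeline would want a fallback for outputs that do not match the pattern.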

Below, Phi-2 is presented with a dialog that acts as a contextual reference for the conversation and for how to continue it. This example dialog serves well for in-context learning, allowing the SLM to continue the conversation.

    # Reuses the `pipe` object created earlier; the dialog is the contextual reference
    prompt = """Alice: I don't know why, I'm struggling to maintain focus while studying. Any suggestions?
    Bob: Well, have you tried creating a study schedule and sticking to it?
    Alice: Yes, I have, but it doesn't seem to help much.
    Bob: Hmm, maybe you should try studying in a quiet environment, like the library.
    Alice: ."""

    outputs = pipe(
        prompt,
        max_new_tokens=300,
        do_sample=True,
        temperature=0.7,
        top_k=50,
        top_p=0.95,
    )
    print(outputs)

The result is shown below; Phi-2 created a number of different conversation paths from the contextual reference.

This illustrates how Phi-2 can be used for chit-chat/small talk, developing different dialog paths, or creating a mockup of a conversation.

    Setting `pad_token_id` to `eos_token_id`:50256 for open-end generation.
    [{'generated_text': "Alice: I don't know why, I'm struggling to maintain focus while studying. Any suggestions?Bob: Well, have you tried creating a study schedule and sticking to it?Alice: Yes, I have, but it doesn't seem to help much.Bob: Hmm, maybe you should try studying in a quiet environment, like the library.Alice: ...Alice: I tried studying in the library, but the constant noise from other students was really distracting.Bob: Oh, that's unfortunate. Maybe you could try using noise-canceling headphones?Alice: I thought about that, but I don't have any with me right now.Bob: In that case, have you considered going to a coffee shop? They usually have a quieter atmosphere.Alice:...Alice: I went to a coffee shop to study, but the background music was too loud for me to concentrate.Bob: I see. Maybe you could try finding a study group?Alice: I thought about that, but I prefer studying alone.Bob: Well, have you considered using a study app or website? They often have features to block out distractions.Alice: ...Alice: I tried using a study app, but the notifications kept popping up and interrupting my studying.\nBob: Oh, that's frustrating. Maybe you could try studying in a different location altogether?\nAlice: I thought about that, but I'm not sure where else to go.\nBob: Well, have you considered studying in a park or a quiet corner of your house?\nAlice: \n...\n\nAlice: I tried studying in a park, but the presence of other people and their activities was too distracting.\nBob: I understand."}]
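To turn a generated continuation like the one above into usable dialog paths, the text has to be split back into individual speaker turns. The following is a rough sketch, assuming the speaker labels are known up front and always appear as `Name:`; the helper name `split_turns` is hypothetical, and placeholder turns such as `...` are simply dropped.

```python
import re


def split_turns(text: str, speakers: tuple[str, ...] = ("Alice", "Bob")) -> list[tuple[str, str]]:
    """Split generated dialog text into (speaker, utterance) pairs."""
    pattern = r"\b(" + "|".join(re.escape(name) for name in speakers) + r"):\s*"
    # re.split with one capture group yields [preamble, speaker, utterance, speaker, utterance, ...]
    parts = re.split(pattern, text)
    turns = []
    for speaker, utterance in zip(parts[1::2], parts[2::2]):
        utterance = utterance.strip()
        # Skip empty turns and placeholders that consist only of dots.
        if utterance.strip(". "):
            turns.append((speaker, utterance))
    return turns


# Excerpt of the continuation shown above.
continuation = (
    "Alice: ...Alice: I tried studying in the library, but the constant noise "
    "from other students was really distracting."
    "Bob: Oh, that's unfortunate. Maybe you could try using noise-canceling headphones?"
)

turns = split_turns(continuation)
for speaker, utterance in turns:
    print(f"{speaker}: {utterance}")
```

Note that this naive split would also break on a speaker name followed by a colon inside an utterance, so it is only suited to simple two-party mockups like this one.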

In Conclusion

For now, it seems highly feasible to use NLU in conjunction with an SLM to underpin a chatbot development framework.

Running an SLM locally and using an augmented generation approach with in-context learning can address impediments like inference latency, token cost, model drift, data privacy, data governance and more.
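One way to picture NLU working in conjunction with an SLM is a simple confidence-based router: the NLU engine handles utterances it recognises with high confidence, and everything else falls through to the SLM. The sketch below is purely illustrative. The function `route_utterance`, the 0.7 threshold and both stub functions are assumptions, standing in for a real NLU engine and a locally hosted SLM.

```python
from typing import Callable, Tuple


def route_utterance(
    utterance: str,
    nlu_classify: Callable[[str], Tuple[str, float]],
    slm_generate: Callable[[str], str],
    confidence_threshold: float = 0.7,
) -> dict:
    """Use the NLU intent when it is confident, otherwise fall back to the SLM."""
    intent, confidence = nlu_classify(utterance)
    if confidence >= confidence_threshold:
        return {"handled_by": "nlu", "intent": intent}
    return {"handled_by": "slm", "response": slm_generate(utterance)}


def toy_nlu(utterance: str) -> Tuple[str, float]:
    # Stand-in for a real NLU engine: one hard-coded intent with made-up scores.
    if "close my account" in utterance.lower():
        return ("close_account", 0.92)
    return ("unknown", 0.20)


def toy_slm(utterance: str) -> str:
    # Stand-in for a call to a locally hosted SLM such as Phi-2.
    return f"(SLM reply to: {utterance})"


print(route_utterance("I would like to close my account", toy_nlu, toy_slm))
print(route_utterance("Any tips for staying focused?", toy_nlu, toy_slm))
```

Passing the NLU and SLM in as plain callables keeps the routing logic independent of any particular framework or model.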
