A study introduced a novel approach to RAG, but more importantly it highlighted a number of shortcomings of RAG as we know it:

- The strategies for deciding when to use RAG, as opposed to other methods, often rely on a set of static rules. The conversational UI usually has a number of parameters which trigger a call to the RAG implementation.

- The strategies for deciding what to retrieve typically limit themselves to the LLM’s most recent sentence or the last few tokens.

- Because the lookup trigger is not optimised, unnecessary retrievals take place.

- These unnecessary and unoptimised retrievals can add unwanted noise to the prompt.

- The extra text adds cost and inference wait time, and can potentially cause timeouts.

- RAG does not necessarily maintain and take into consideration the complete contextual span of the conversation.

Dynamic RAG

Dynamic Retrieval Augmented Generation actively decides when and what to retrieve during the text generation process.

The two key elements of Dynamic RAG are:

- Identifying the optimal moment to activate the retrieval module (when to retrieve) and

- Crafting the appropriate query once retrieval is triggered (what to retrieve)

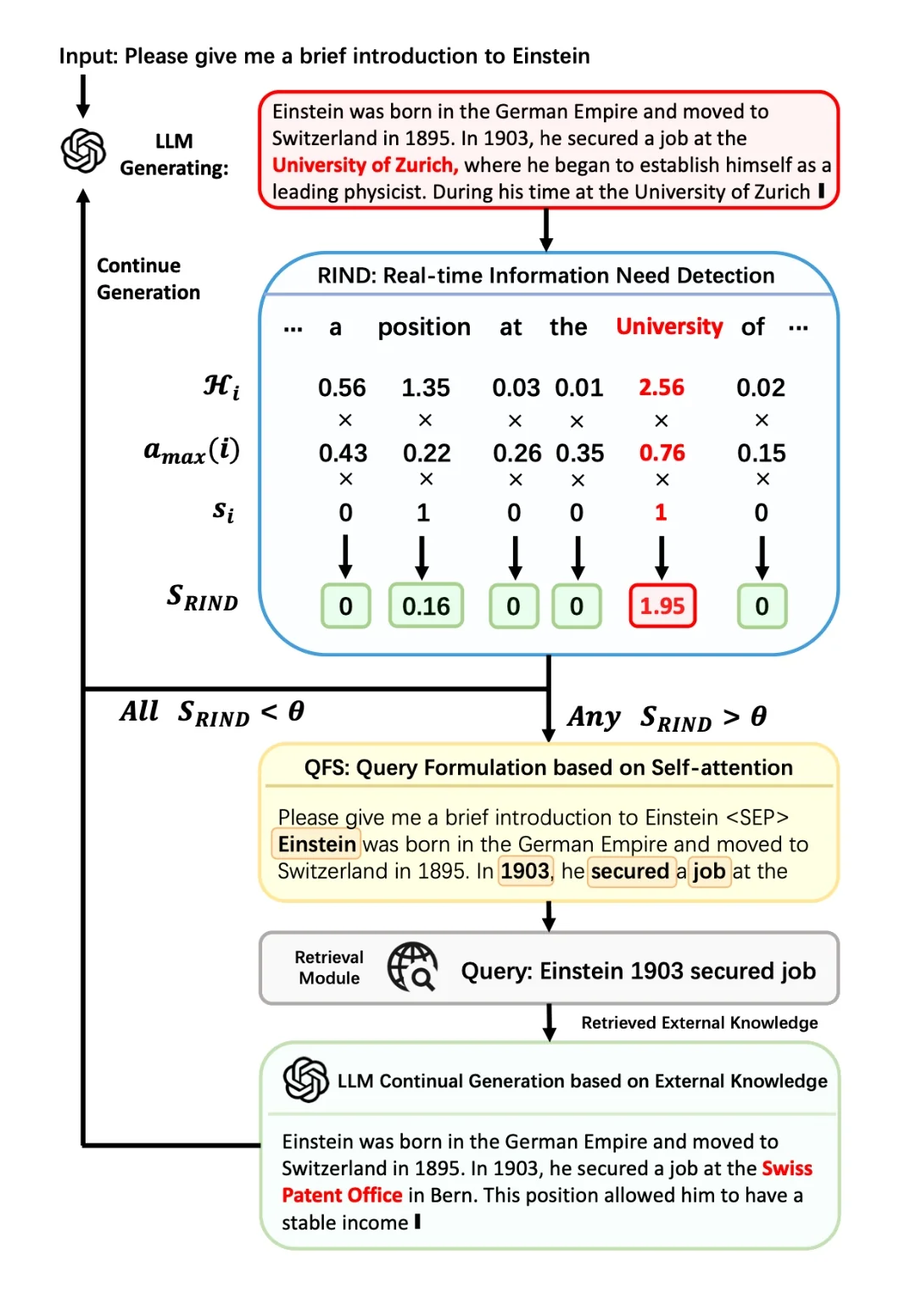

The proposed framework consists of two components, RIND and QFS.

RIND

RIND stands for Real-time Information Needs Detection, which takes into consideration:

- The LLM’s uncertainty about its own generated content,

- The importance of each token, and

- The semantic significance of each token.
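To make the three signals above more concrete, here is a minimal sketch of how they could be combined into one per-token score. It rests on several assumptions of mine: uncertainty is measured as the entropy of the token's probability distribution, importance as the largest attention weight the token receives from later tokens, and semantic significance as a simple stopword filter, with the three multiplied together. The names `rind_scores`, `should_retrieve` and `STOPWORDS`, and the threshold value, are hypothetical and not taken from the study.

```python
import math

# Hypothetical stopword list; a real system would use a fuller one.
STOPWORDS = {"the", "a", "an", "of", "in", "is", "was", "and", "to"}

def entropy(probs):
    """Shannon entropy of one next-token probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def rind_scores(tokens, token_probs, max_attention):
    """Score each generated token; a higher score suggests a stronger need to retrieve.

    tokens        -- generated tokens as strings
    token_probs   -- for each token, the distribution it was sampled from
    max_attention -- for each token, the largest attention weight it receives
                     from later tokens (an assumed stand-in for "importance")
    """
    scores = []
    for tok, probs, attn in zip(tokens, token_probs, max_attention):
        # Assumption: stopwords carry no semantic significance, so they score zero.
        semantic = 0.0 if tok.lower() in STOPWORDS else 1.0
        scores.append(entropy(probs) * attn * semantic)
    return scores

def should_retrieve(scores, threshold):
    """Return the index of the first token whose score exceeds the threshold, or None."""
    for i, score in enumerate(scores):
        if score > threshold:
            return i
    return None

# Toy example with made-up numbers.
tokens = ["Einstein", "was", "born", "in", "Ulm"]
token_probs = [[0.9, 0.1], [0.99, 0.01], [0.8, 0.2], [0.95, 0.05], [0.4, 0.3, 0.3]]
max_attention = [0.5, 0.1, 0.3, 0.1, 0.6]
scores = rind_scores(tokens, token_probs, max_attention)
print(should_retrieve(scores, threshold=0.5))
```

In this toy run only the last token is both uncertain and attended to strongly enough to cross the threshold, so retrieval would be triggered at that position rather than on a fixed schedule.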

QFS

Retrieval queries are formulated by a second component, QFS: Query Formulation based on Self-Attention. QFS reimagines query formulation by leveraging the LLM’s self-attention across the entire context, rather than only the most recent sentence or tokens.
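As a rough illustration of attention-based query formulation, the sketch below picks the context tokens that receive the most attention from the position where retrieval was triggered, and joins them in their original order. The function name `formulate_query`, the `top_n` parameter and the attention weights are hypothetical; how the study extracts and aggregates attention is not covered here.

```python
def formulate_query(tokens, attention_from_trigger, top_n=3):
    """Build a query from the tokens the trigger position attends to most.

    tokens                 -- all context tokens preceding the trigger position
    attention_from_trigger -- one attention weight per token (hypothetical input,
                              for example read from one layer of the model)
    """
    if len(tokens) != len(attention_from_trigger):
        raise ValueError("need one attention weight per token")
    # Rank token positions by attention weight, highest first.
    ranked = sorted(
        range(len(tokens)),
        key=lambda i: attention_from_trigger[i],
        reverse=True,
    )
    # Keep the top-n positions, then restore their original order in the text.
    keep = sorted(ranked[:top_n])
    return " ".join(tokens[i] for i in keep)

# Toy example with made-up attention weights.
context = ["Where", "was", "the", "physicist", "Einstein", "born"]
weights = [0.05, 0.02, 0.01, 0.30, 0.45, 0.17]
print(formulate_query(context, weights))
```

Because the selection looks at the whole context, a relevant token from early in the conversation can still end up in the query, which is the point of not limiting the query to the last sentence.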

DRAGIN

DRAGIN is designed to decide when and what to retrieve, based on the LLM’s real-time information needs during the text generation process. It is described as a lightweight RAG framework that can be incorporated into any Transformer-based LLM without further training, fine-tuning, or prompt engineering.

Single-Round Retrieval-Augmented LLM

Large language models (LLMs) have proven highly effective across various tasks. Nevertheless, their internal knowledge is often insufficient for tasks demanding extensive knowledge. To tackle this issue, Retrieval-Augmented Generation (RAG) strategies are frequently used to boost LLM performance. The most common and most straightforward of these is single-round retrieval augmentation, where the user’s input is used to retrieve context once, before generation starts.
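A minimal sketch of the single-round pattern follows. The word-overlap retriever is a toy stand-in for a real vector search, and `generate` is a hypothetical callable representing the LLM call; neither comes from the study.

```python
def retrieve(query, corpus, k=1):
    """Toy retriever: rank documents by word overlap with the query."""
    query_words = set(query.lower().split())
    ranked = sorted(
        corpus,
        key=lambda doc: len(query_words & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:k]

def single_round_rag(question, corpus, generate, k=1):
    """Retrieve once, using only the user's input, then generate once."""
    passages = retrieve(question, corpus, k)
    prompt = "Context:\n" + "\n".join(passages) + "\n\nQuestion: " + question
    return generate(prompt)

# Toy example; the lambda simply echoes the prompt in place of an LLM.
corpus = ["Paris is the capital of France", "Berlin is the capital of Germany"]
print(single_round_rag("What is the capital of France", corpus, generate=lambda p: p))
```

Retrieval happens exactly once here, so anything the model turns out to need halfway through its answer is never looked up.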

Multi-Round Retrieval-Augmented LLM

While single-round retrieval suffices for straightforward tasks or instances with clear user information needs, it falls short for complex tasks like long-form question answering, open-domain summarisation, and chain-of-thought reasoning. Relying solely on the user’s initial input for retrieval may fail to encompass all the external knowledge necessary for the model’s requirements. Consequently, researchers have initiated investigations into multi-round retrieval augmentation.
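The multi-round idea can be sketched as a generation loop that pauses to retrieve whenever a trigger fires. All four callables below are hypothetical stand-ins, and regenerating the step after retrieval is my own assumption about how the new evidence gets used, not a description of any specific framework.

```python
def multi_round_rag(question, generate_step, needs_retrieval, make_query,
                    retrieve, max_steps=20):
    """Generate in steps, retrieving only when the trigger fires.

    generate_step(question, passages, draft) -> next sentence, or None when finished
    needs_retrieval(sentence)                -> True if the sentence looks unreliable
    make_query(question, draft, sentence)    -> retrieval query string
    retrieve(query)                          -> list of passages
    """
    draft, passages, retrievals = [], [], 0
    for _ in range(max_steps):
        sentence = generate_step(question, passages, draft)
        if sentence is None:
            break
        if needs_retrieval(sentence):
            passages = retrieve(make_query(question, draft, sentence))
            retrievals += 1
            # Assumption: regenerate the step with the new evidence in context.
            sentence = generate_step(question, passages, draft)
            if sentence is None:
                break
        draft.append(sentence)
    return " ".join(draft), retrievals
```

The returned retrieval count makes the cost trade-off visible: a static rule that retrieves at every step would pay for `max_steps` lookups, while a well-tuned trigger pays only for the steps that need them.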

Conclusion

As I have mentioned before, complexity is being added to LLM integrations and applications. Added to this, a multi-round querying approach is being taken. This adds cost, latency and dependency on one or more LLMs, which should encourage enterprises to use open-source, locally hosted LLMs. With the increase in RAG complexity, consideration should be given to RAG agents, or as LlamaIndex refers to it, Agentic RAG.
