More LLM calls enhance performance on easy queries but diminish it on hard ones.

So what scaling laws can be discovered and leveraged? And what is the optimal number of LLM calls?

In recent studies where LLM interactions are structured with complex prompting strategies, and a solution is built around those interactions, two phenomena stand out:

- Generative AI approaches and strategies involve more LLM calls. I have always maintained that offloading most, if not all, of the functionality to LLMs implies more calls. This adds overhead and creates problems such as longer inference time, higher cost and dependency on a specific LLM.

- In many instances, multiple calls are made to the LLM, and one or more LLMs are used to select the best response to use or to present to the user.
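Majority voting is one simple way to implement the selection step in the second point. The following Python is a minimal sketch under stated assumptions, not the paper's implementation: `call_llm` is a hypothetical placeholder for a single LLM call, and responses are compared by exact string match.

```python
from collections import Counter
from typing import Callable, List


def majority_vote(responses: List[str]) -> str:
    """Return the most frequent response (exact string match)."""
    if not responses:
        raise ValueError("need at least one response")
    # most_common orders equal counts by first occurrence, so ties go to
    # the response that was seen first.
    return Counter(responses).most_common(1)[0][0]


def voting_inference(query: str, k: int, call_llm: Callable[[str], str]) -> str:
    """Make k independent calls for the same query and return the majority answer.

    `call_llm` is a hypothetical placeholder for a real LLM client call.
    """
    responses = [call_llm(query) for _ in range(k)]
    return majority_vote(responses)


# Usage with a fake "LLM" that returns canned answers in sequence.
canned = iter(["B", "A", "B"])
print(voting_inference("Which option is correct?", 3, lambda q: next(canned)))  # prints "B"
```

Each extra unit of `k` is one more LLM call, which is exactly the cost and latency overhead described in the first point.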

These approaches aim to leverage LLMs to the maximum while avoiding fine-tuning, human-in-the-loop processes or external data.

Understanding these scaling dynamics also helps clarify the limits of compound inference strategies, because more LLM calls do not help uniformly: they enhance performance on easy queries but diminish it on hard ones.

Reaching consensus

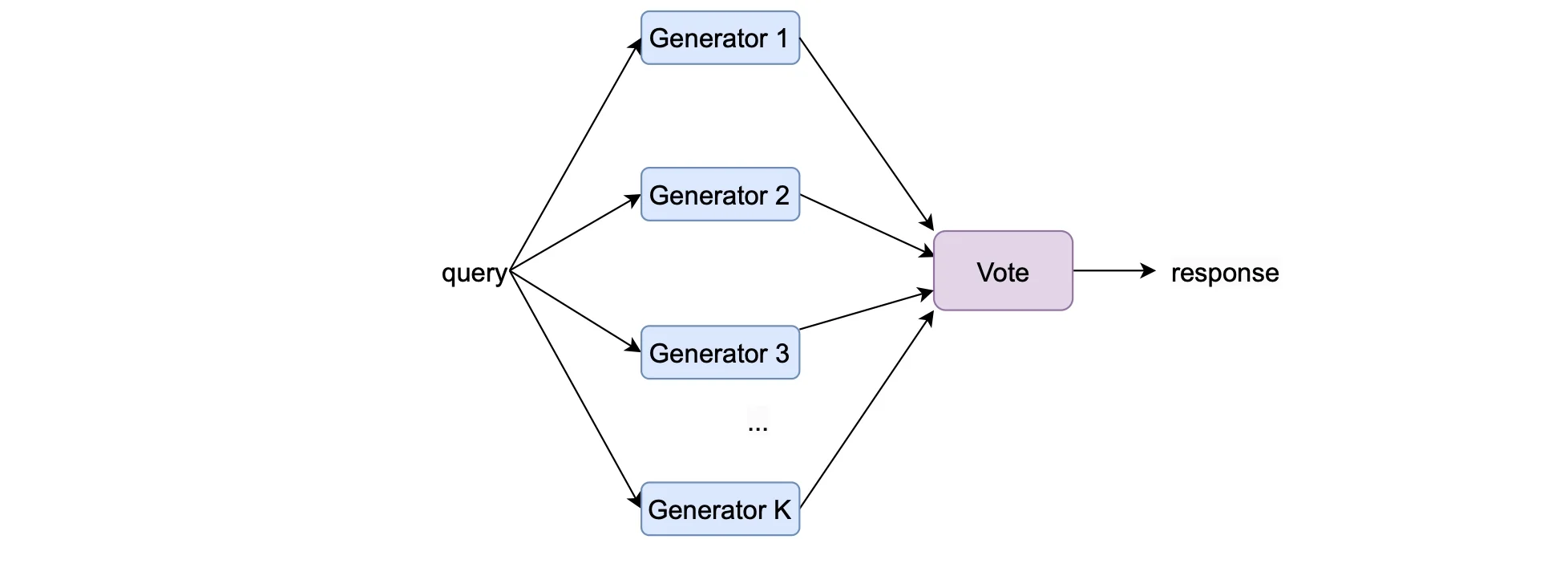

This paper investigates the impact of the number of Large Language Model (LLM) calls on the performance of compound systems used in language tasks.

These compound systems aggregate responses from multiple LLM calls.

The research examines this theoretically and empirically to understand the scaling laws governing the performance of such systems.

The research shows that, contrary to expectations, the performance of Voting Inference Systems initially increases and then decreases with the number of LLM calls across various language tasks.
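A toy model makes this rise-then-fall behaviour easy to see. It is an assumption for illustration, not necessarily the paper's exact formulation: each call answers a query correctly with independent probability $p$, the number of calls $K$ is odd, and the system returns the majority answer between the correct answer and a single incorrect alternative. Per-query accuracy is then a binomial tail, and a dataset with a fraction $\alpha$ of easy queries ($p > 1/2$) and the rest hard ($p < 1/2$) averages the two:

```latex
A_K(p) = \sum_{j=(K+1)/2}^{K} \binom{K}{j}\, p^{j} (1-p)^{K-j}

\bar{A}_K = \alpha\, A_K(p_{\mathrm{easy}}) + (1-\alpha)\, A_K(p_{\mathrm{hard}}),
\qquad p_{\mathrm{easy}} > \tfrac{1}{2} > p_{\mathrm{hard}}
```

As $K$ grows, $A_K(p)$ tends to 1 when $p > 1/2$ and to 0 when $p < 1/2$, so $\bar{A}_K$ tends to $\alpha$. Whether it first overshoots $\alpha$ at some intermediate $K$ depends on how quickly the easy and hard terms converge.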

The image below illustrates what I described in the introduction: multiple instances of inference are run, and a predefined process selects the best response to use.

This rise-then-fall pattern arises because more LLM calls enhance performance on easy queries but diminish it on hard ones.

By understanding this, the study devises a method to compute the optimal number of LLM calls to maximise system performance, based on a small number of samples.

Experiments validate that this scaling law accurately predicts the performance of Voting Inference Systems and identifies the optimal number of LLM calls.

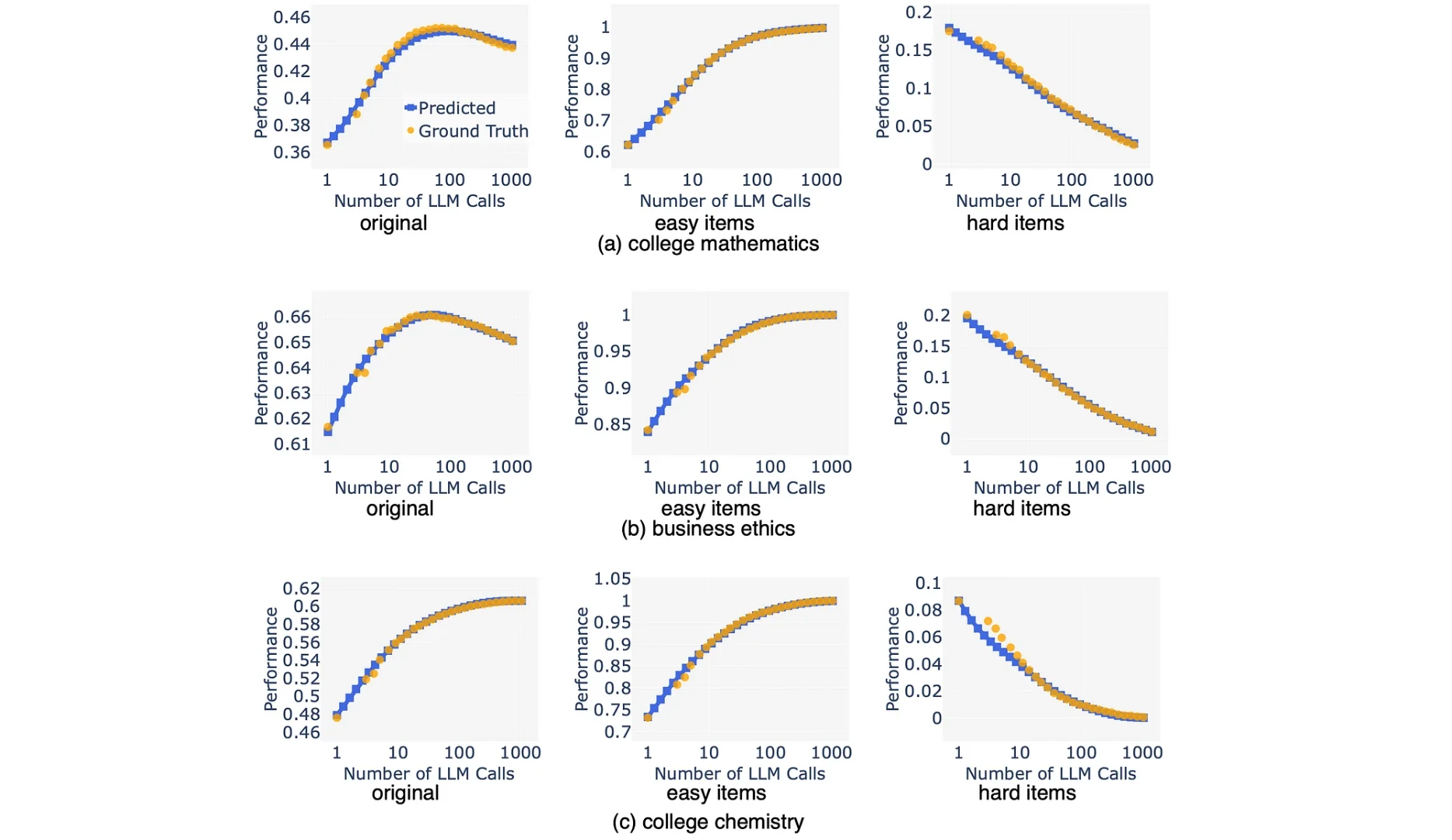

The image above shows that as the number of LLM calls increases, the one-layer Voting Inference System improves significantly on easy items while deteriorating on hard items.

In other words, additional calls help the system answer easier questions more reliably but erode its accuracy on harder ones, so overall performance can first rise and then fall as calls are added.
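The per-item divergence can be computed exactly under a simplified model. This is an assumption for illustration: each call is independently correct with probability `p`, `k` is odd, and the majority answer wins. The per-call accuracies 0.8 (easy) and 0.3 (hard) are hypothetical values, not figures from the paper.

```python
from math import comb


def majority_accuracy(p: float, k: int) -> float:
    """Probability that a strict majority of k independent calls is correct.

    Toy-model assumption: each call is correct with probability p, and k is
    odd so there are no ties.
    """
    if k % 2 == 0:
        raise ValueError("k must be odd to avoid ties")
    return sum(comb(k, j) * p**j * (1 - p) ** (k - j) for j in range(k // 2 + 1, k + 1))


# Hypothetical per-call accuracies for one easy and one hard item.
P_EASY, P_HARD = 0.8, 0.3

for k in (1, 3, 5, 11, 21):
    easy = majority_accuracy(P_EASY, k)
    hard = majority_accuracy(P_HARD, k)
    print(f"K={k:2d}  easy={easy:.3f}  hard={hard:.3f}")
```

The easy column climbs toward 1 while the hard column falls toward 0: voting amplifies whichever answer a single call already favours.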

In the graphs above, the analysis of the Inference System’s performance on real-world datasets reveals an intriguing pattern. The empirical performance, shown as orange dots averaged over 1000 runs, demonstrates that increasing the number of calls does not consistently improve performance.

This inconsistency arises from the contrasting effects of additional calls on easy and hard queries within the system. The scaling law, depicted by the blue lines, accurately predicts these performance trends.
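The same effect can be reproduced with a small simulation that averages over 1000 runs, in the spirit of the orange dots. This is a sketch under stated assumptions: the dataset of 30 easy items (per-call accuracy 0.8) and 20 hard items (0.3) is hypothetical, calls are simulated as independent random draws rather than real LLM calls, and `k` is odd. Under this toy model the exact accuracy is 0.600 at K = 1 and about 0.630 at K = 5, drifting back toward 0.6 (the share of easy items) for large K, so the simulated values should land close to these.

```python
import random
from typing import List


def simulate_voting_accuracy(item_ps: List[float], k: int, runs: int, seed: int = 0) -> float:
    """Monte Carlo estimate of a k-call majority-vote system's accuracy.

    Toy-model assumptions: each call on item i is independently correct with
    probability item_ps[i], and k is odd so a strict majority always exists.
    Accuracy is averaged over all items and all runs.
    """
    rng = random.Random(seed)
    correct = 0
    for _ in range(runs):
        for p in item_ps:
            votes_for_correct = sum(rng.random() < p for _ in range(k))
            correct += votes_for_correct > k // 2  # bool counts as 0 or 1
    return correct / (runs * len(item_ps))


# Hypothetical dataset: 30 easy items and 20 hard items.
items = [0.8] * 30 + [0.3] * 20
for k in (1, 3, 5, 9, 25):
    acc = simulate_voting_accuracy(items, k, runs=1000)
    print(f"K={k:2d}  simulated accuracy={acc:.3f}")
```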

Specifically, it enables the optimal ensemble size to be selected without exhaustively evaluating every possible number of calls. This underscores how the study’s analytical model can guide system design and improve performance in practical applications.
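Once a predictive curve is available, choosing the ensemble size reduces to maximising a cheap formula instead of running the real system at every K. The sketch assumes a simplified mixture model: independent calls, odd K, majority vote, and a fraction `alpha` of easy items with per-call accuracy `p_easy`, the rest hard with `p_hard`. The parameter values are hypothetical and are treated as already estimated; the paper's own procedure for fitting them from a small number of samples may differ.

```python
from math import comb


def majority_accuracy(p: float, k: int) -> float:
    """P(strict majority of k independent calls is correct), k assumed odd."""
    return sum(comb(k, j) * p**j * (1 - p) ** (k - j) for j in range(k // 2 + 1, k + 1))


def predicted_accuracy(k: int, alpha: float, p_easy: float, p_hard: float) -> float:
    """Mixture model: a fraction alpha of queries are easy, the rest hard."""
    return alpha * majority_accuracy(p_easy, k) + (1 - alpha) * majority_accuracy(p_hard, k)


def optimal_num_calls(alpha: float, p_easy: float, p_hard: float, max_k: int = 101) -> int:
    """Return the odd K in [1, max_k] that maximises predicted accuracy.

    Scanning the closed form is cheap, so it stands in for running the real
    system at every candidate K.
    """
    candidates = range(1, max_k + 1, 2)
    return max(candidates, key=lambda k: predicted_accuracy(k, alpha, p_easy, p_hard))


# Hypothetical parameters, taken as already estimated from a small sample.
best_k = optimal_num_calls(alpha=0.6, p_easy=0.8, p_hard=0.3)
print(best_k, round(predicted_accuracy(best_k, 0.6, 0.8, 0.3), 4))
```

With these hypothetical parameters the scan returns K = 5, even though ensembles of up to 101 calls are considered; if every query were easy (`alpha=1.0`), it would instead pick the largest K allowed.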

Conclusion

The study’s findings underscore a crucial point: simply increasing the number of LLM calls doesn’t always lead to improved performance.

Instead, it emphasises the significance of thoughtful design in compound systems. By revealing the nuanced effects of LLM call quantities on system performance, the research highlights the importance of careful consideration in constructing AI systems.

The hope is that these insights will inspire further investigation and innovation in the development of maximally effective AI systems.

This article was originally published on Medium.
