Teaching Small Language Models to Self-Correct & Reason by using creative data formats for fine-tuning data. Via Prompt Erasure & Partial Answer Masking.

Small Language Models typically lack self-awareness & tend to exhibit greater confidence in their generated responses. Following an approach of Prompt Erasure & Partial Answer Masking (PAM) drastically improves the quality of SLM responses.

Introduction

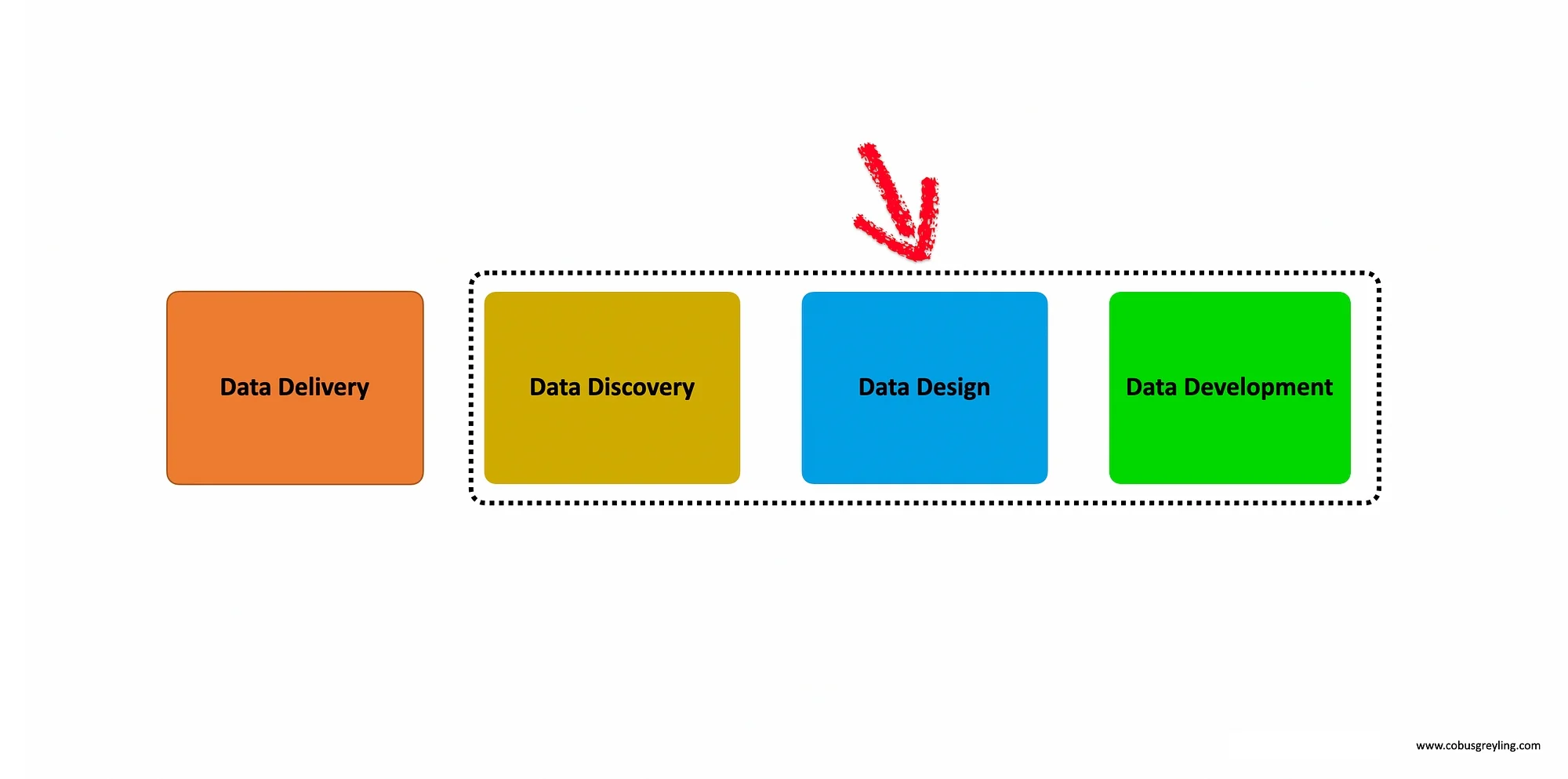

It seems that during the recent past, when it comes to Language Models (both LLMs and SLMs) the focus has been on the data delivery portion. In other words, how to bring proprietary data to the language model at inference.

The process of data delivery can be divided between two main approaches, gradient and non-gradient approach. Non-gradient approaches have received much focus due to it being transparent and not as opaque as gradient/fine-tuning approaches.

By far the most popular non-gradient approach to data delivery has been RAG, in all its variations.

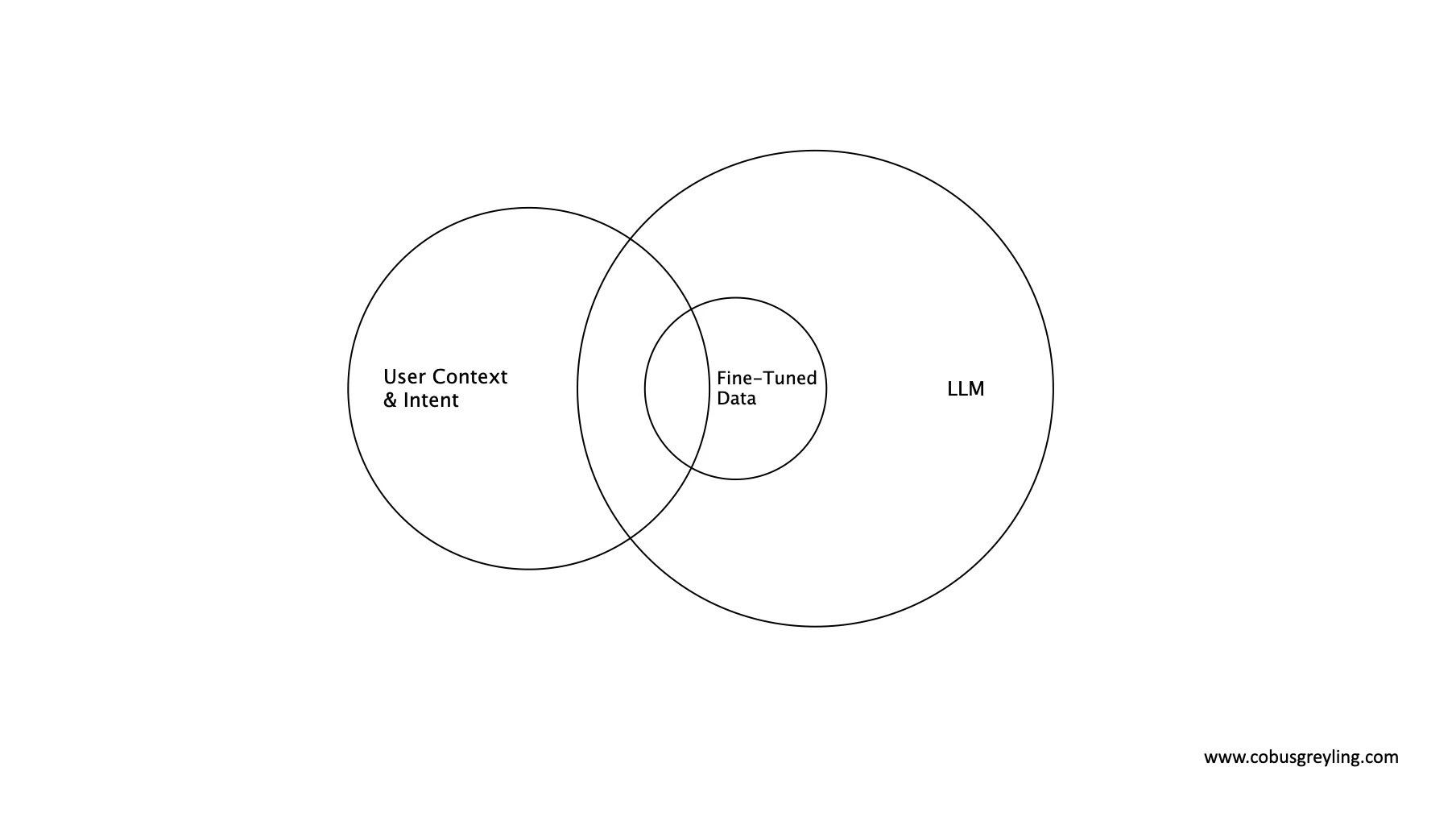

Something I find interesting is that some of the fine-tuning / gradient approaches do not have as its primary aim to imbue the language model with enterprise or domain specific data. But to rather change the behaviour of the model and teach the model, via the structure of the fine-tuning data, specific tasks. These tasks include features like reasoning and self-correction.

Data design

The focus is shifting from data delivery to data design, where the data format is designed in such a way, to imbue the model with specific behavioural abilities.

Reasoning

The primary focus from Microsoft Research in training Orca-2, was to create an open-sourced Small Language Model (SLM) which excels at reasoning. This is achieved by decomposing a problem and solving it step-by-step, which adds to observability and explainability.

In order to achieve this, nuanced training data had to be created, an LLM is presented with intricate prompts which is designed with the intention to elicit strategic reasoning patterns which should yield more accurate results.

Also, during the training phase, the smaller model is exposed to the task and the subsequent output from the LLM. The output data of the LLM defines how the LLM went about in solving the problem.

But here is the catch, the original prompt is not shown to the SLM.

But here is the catch, the original prompt is not shown to the SLM. This approach of Prompt Erasure, is a technique which turns Orca-2 into a Cautious Reasoner because it learns not only how to execute specific reasoning steps, but to strategize at a higher level on how to approach a particular task.

Rather than naively imitating powerful LLMs, the LLM is used as a reservoir of behaviours from which a judicious selection is made for the approach for the task at hand.

Self-correction

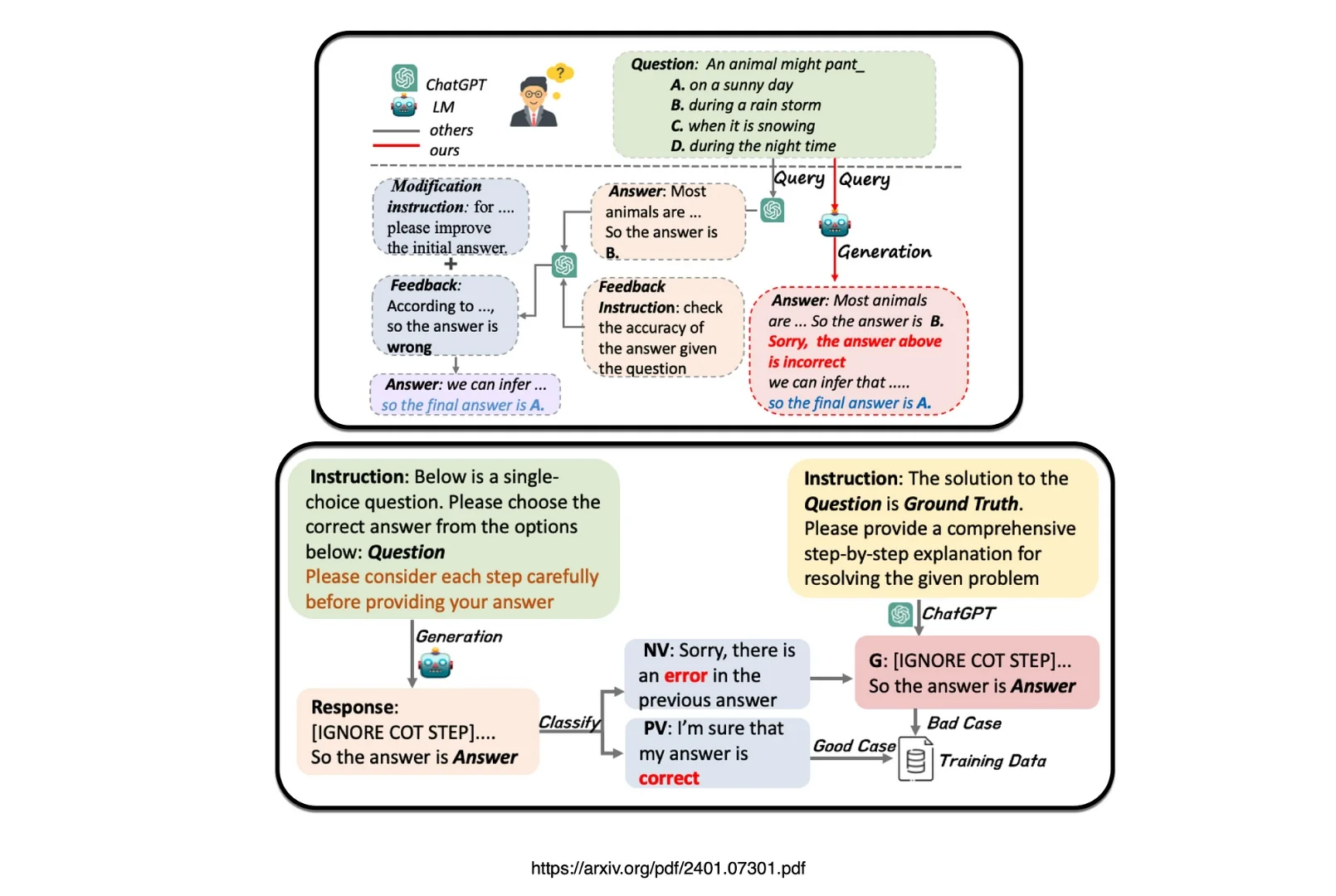

A recent study presented a pipeline for constructing self-correction training data by proposing an approach called Partial Answer Masking (PAM), aiming to equip the model with the capability of intrinsic self-correction through fine-tuning.

The goal of Partial Answer Masking is to instruct the language model to self-correct.

Answer masking

This study experiments by using Language Models ranging in parameter sizes from 6 billion to 13 billion across two tasks.

Introducing a means to enhance the self-correcting ability of small language models, the study presents Intrinsic Self-Correction (ISC), a mechanism relying on two fundamental capacities: self-verification and self-modification.

During the fine-tuning phase, the process introduce Partial Answer Masking (PAM) to imbue the model with self-verification capabilities.

The result is the first demonstration that even small language models with as few as 6 billion parameters possess inherent self-correction abilities during response generation, independent of ground truth reliance.

The proposed Intrinsic Self-Correction endeavours to embed self-correction as an innate pattern within Language Models. It entails an autonomous and spontaneous self-correction process, distinct from existing prompt engineering methods.

To equip Small Language Models with self-correction capabilities, a pipeline is devised for constructing self-correction data and establish the data format, which can be universally applied to generate data for self-correction tasks.

Conclusion

These two studies addresses an approach where nuanced training data is created to imbue small language models (SLMs) with specific skills in reasoning and self-correction.

Fine-tuning is normally associated with adding knowledge to a model, augmenting the knowledge of the model. However, this specific approach of data design focusses on fine-tuning which enhanced the capabilities and behaviour of the language model, as opposed to adding knowledge for retrieval.

I’m quite sure, there must be more examples of creative approaches to designing training data to update model behaviour.

.webp)