Can Today’s AI Agents Survive Their Own Runtime?

Cobus Greyling

May 15, 2026

The smartest models fail in production…the infrastructure around them matters more than the intelligence inside them.

The models have never been better…

Claude reasons through multi-step problems.

It handles complex tool chains.

It processes vast context windows…

And yet, the most common failure mode for AI agents in production has nothing to do with the model.

It has everything to do with what happens around it.

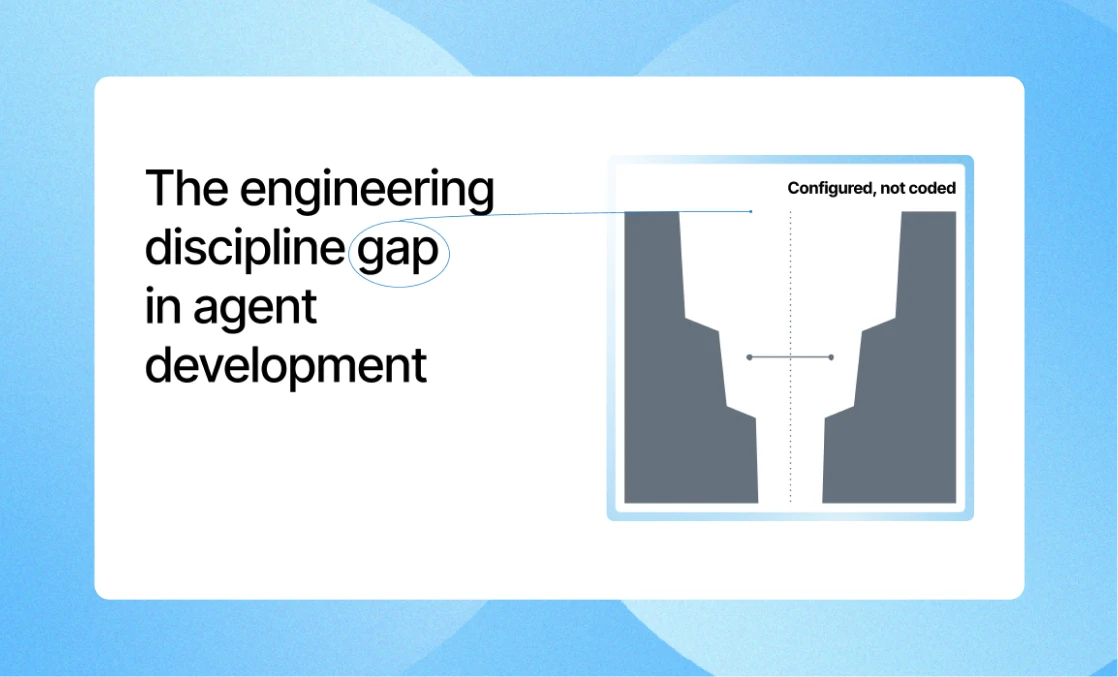

The gap

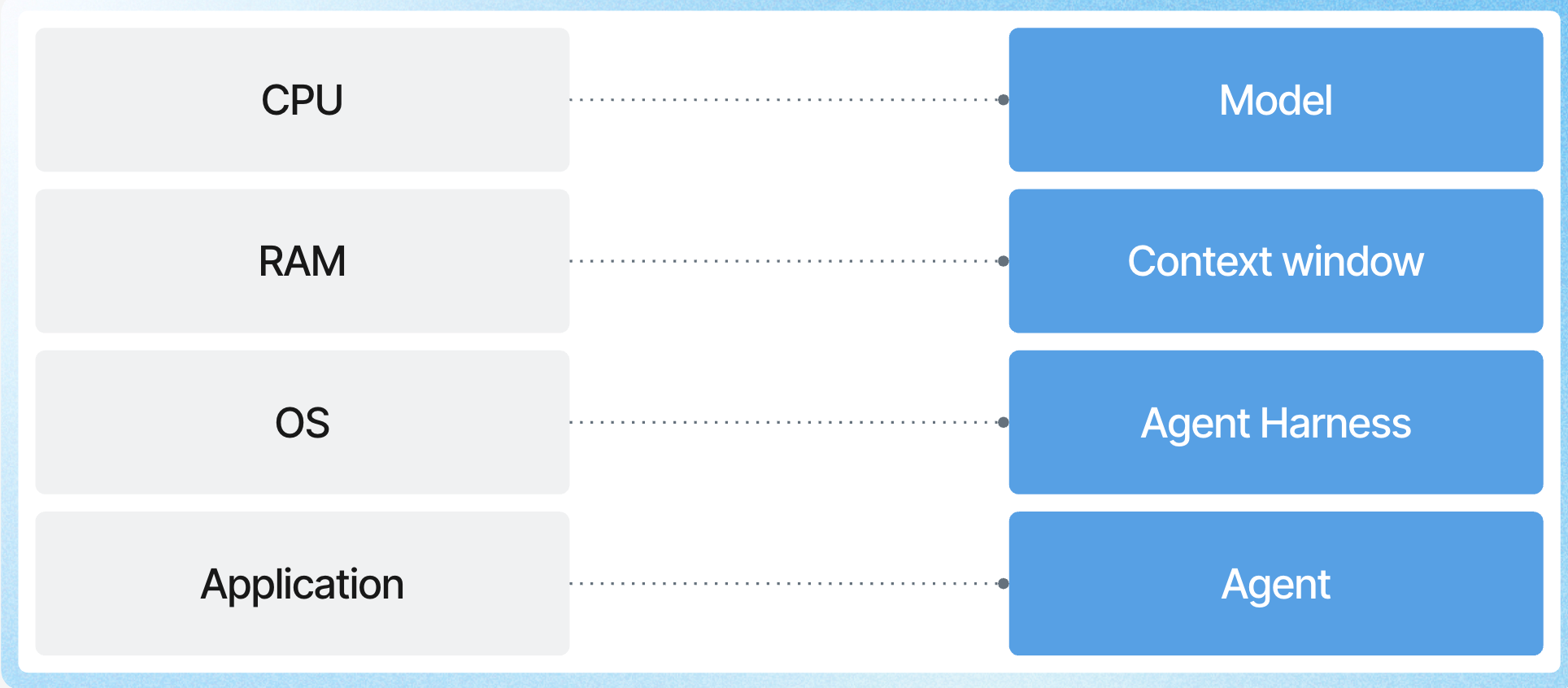

There’s a shared realisation settling over the enterprise AI space…the distance between a demo agent and a production agent isn’t measured in model benchmarks.

It’s measured in infrastructure performance…the harness.

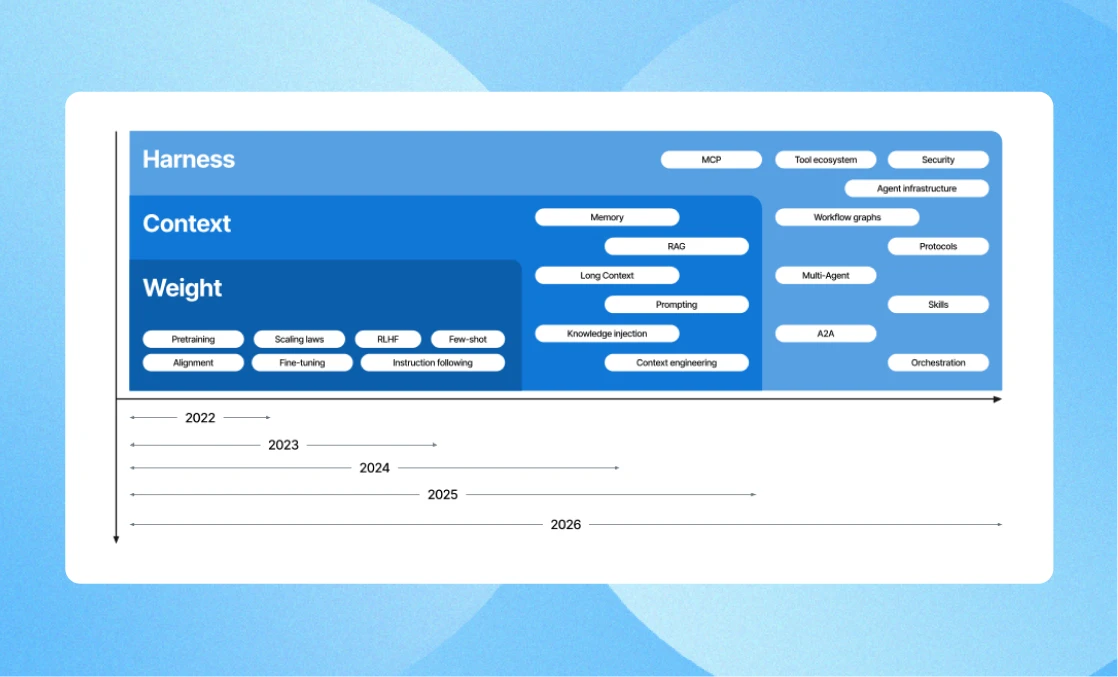

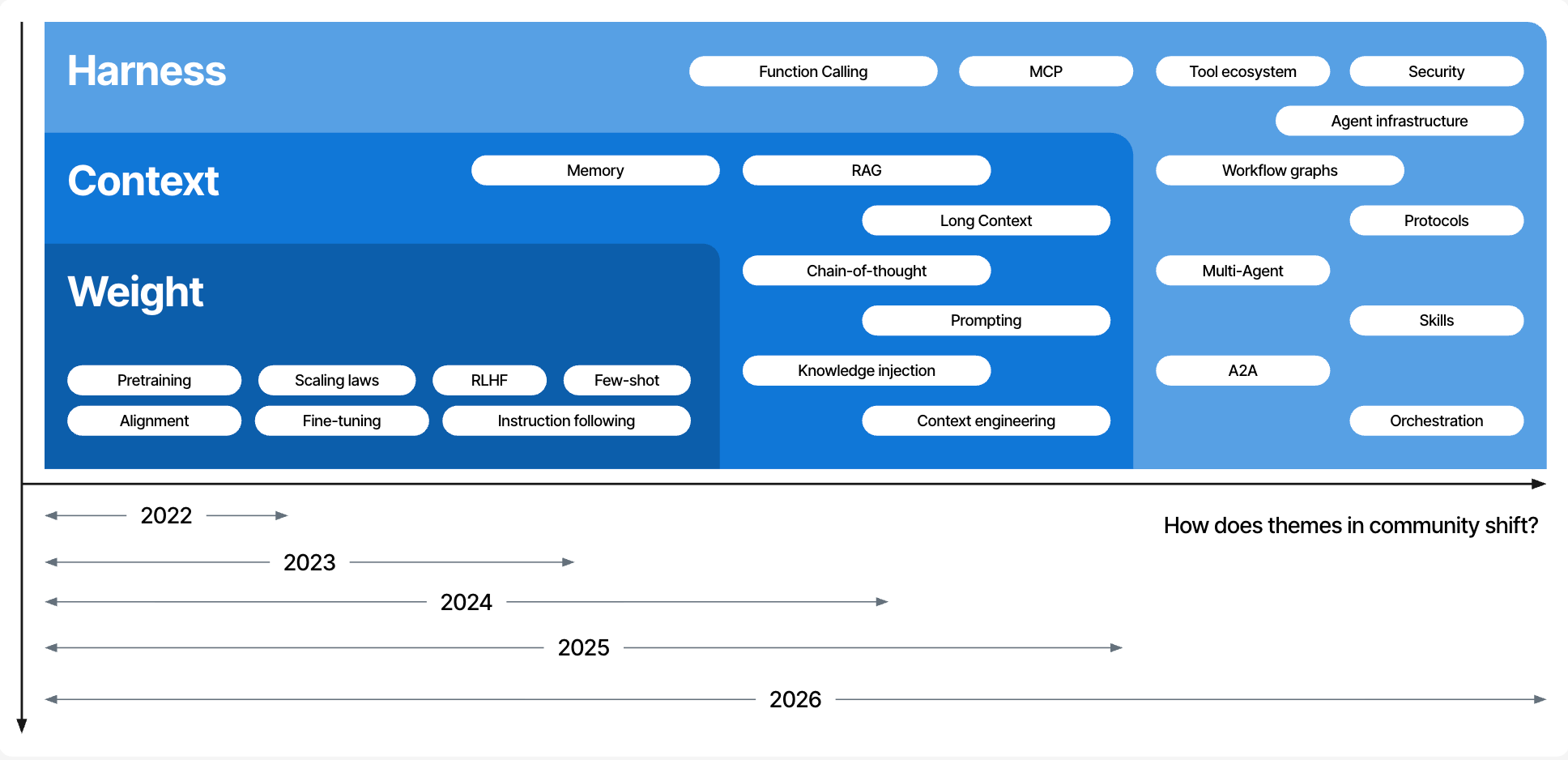

I have written about this shift before. Over the past 24 months, the conversation has moved from what models can do to what systems must provide.

Session state, multi-turn memory, tenant isolation, compliance enforcement and more.

This is the difference between a compelling prototype and something a regulated enterprise can actually deploy.

The agent itself is often the easy part.

The runtime is where the challenges are.
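To make one of those runtime concerns concrete, here is a minimal sketch of session state with tenant isolation. It is an illustration only: the names `Session` and `SessionStore` are hypothetical, the store lives in memory, and a real platform would back this with durable storage and access control.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Multi-turn memory for one conversation (hypothetical shape)."""
    tenant_id: str
    session_id: str
    turns: list[tuple[str, str]] = field(default_factory=list)  # (role, text)


class SessionStore:
    """In-memory store keyed by (tenant_id, session_id)."""

    def __init__(self) -> None:
        self._sessions: dict[tuple[str, str], Session] = {}

    def get_or_create(self, tenant_id: str, session_id: str) -> Session:
        key = (tenant_id, session_id)
        if key not in self._sessions:
            self._sessions[key] = Session(tenant_id, session_id)
        return self._sessions[key]

    def append_turn(self, tenant_id: str, session_id: str, role: str, text: str) -> None:
        self.get_or_create(tenant_id, session_id).turns.append((role, text))

    def history(self, tenant_id: str, session_id: str) -> list[tuple[str, str]]:
        # The tenant is always part of the key, so a matching session_id
        # from another tenant never resolves to this tenant's history.
        session = self._sessions.get((tenant_id, session_id))
        return list(session.turns) if session else []
```

Even this toy version shows why the work adds up: every read and write has to carry the tenant, and that discipline has to hold across every component that touches the session.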

The runtime

The market is quietly splitting into two camps…

- Teams building agent infrastructure from scratch, and

- Teams building agent logic on platforms that handle the infrastructure.

The first camp ships slower, spends 80% of engineering effort on plumbing and rebuilds the same session management and orchestration primitives that every other team is also rebuilding.

The second camp writes 50–200 lines of agent behaviour and lets the platform provide the other 15,000 lines of distributed runtime, compliance, and operational infrastructure.

The agents that will matter in production, the ones handling real enterprise workflows, coordinating across systems, operating within regulatory constraints…won’t be the ones with the best model.

They’ll be the ones with a runtime that can actually survive contact with the real world.

The question isn’t whether your agent can reason. It’s whether its runtime can keep up.

The harness is the product

This is something I’ve been circling for a while.

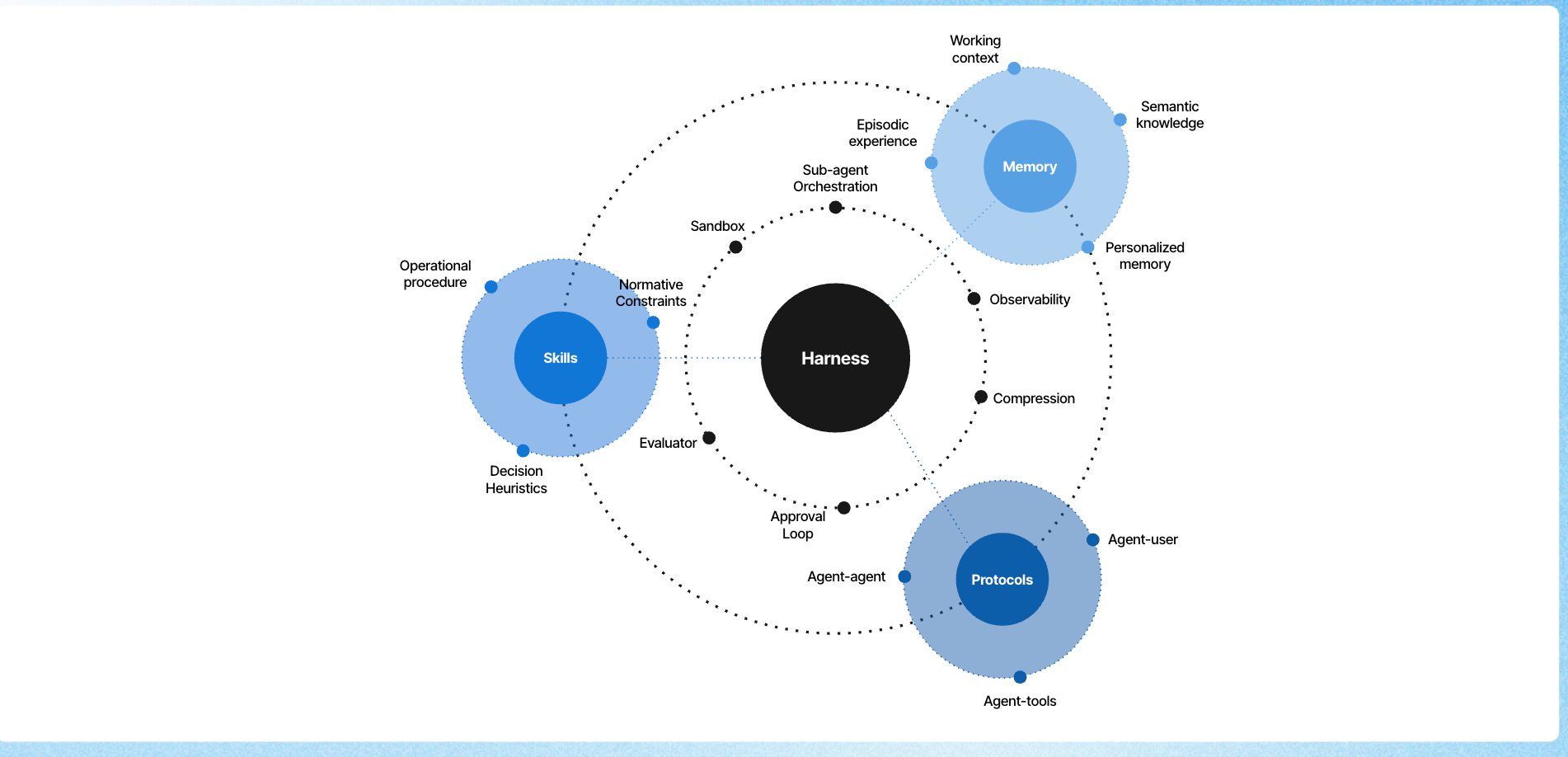

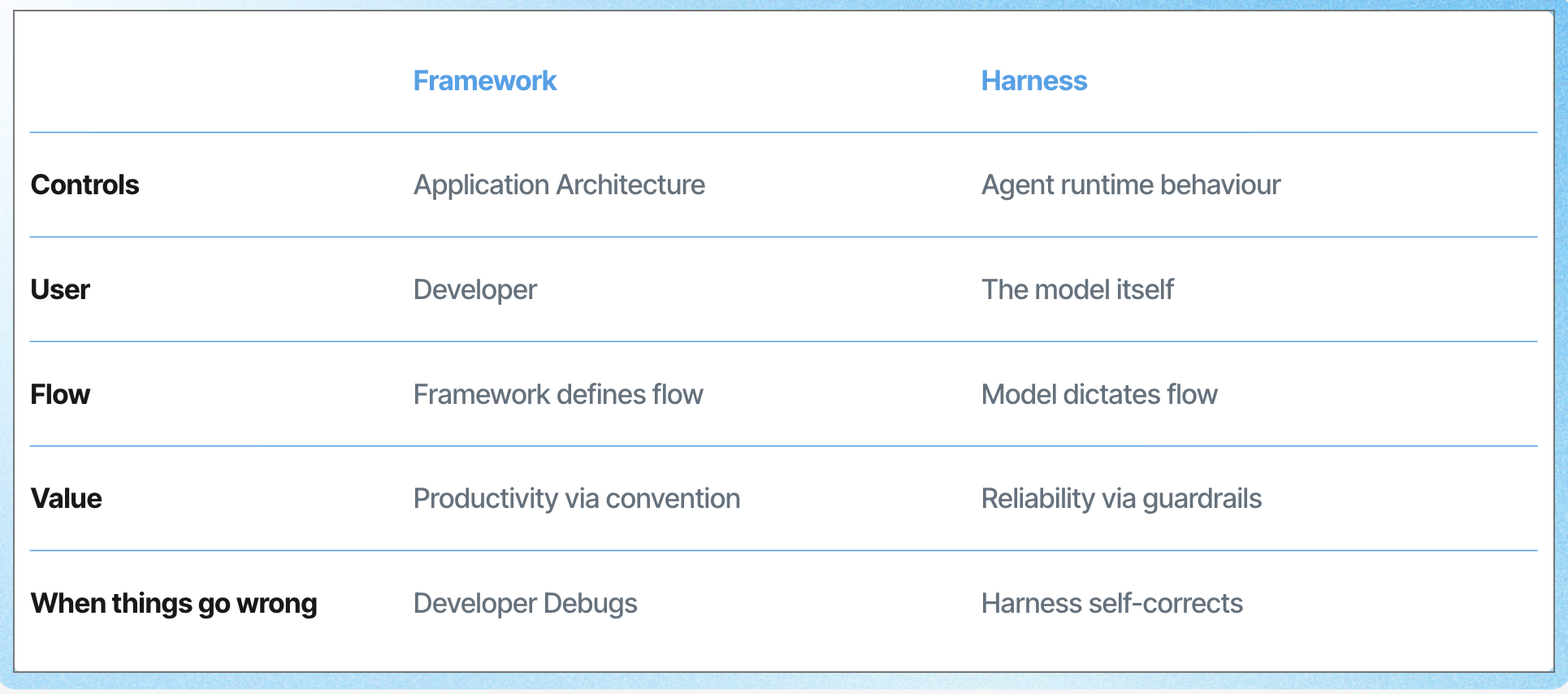

Previously I argued that the harness…the system that reads context, manages tool access, spawns sub-agents, orchestrates handoffs and maintains memory…is becoming more important than the model it wraps.

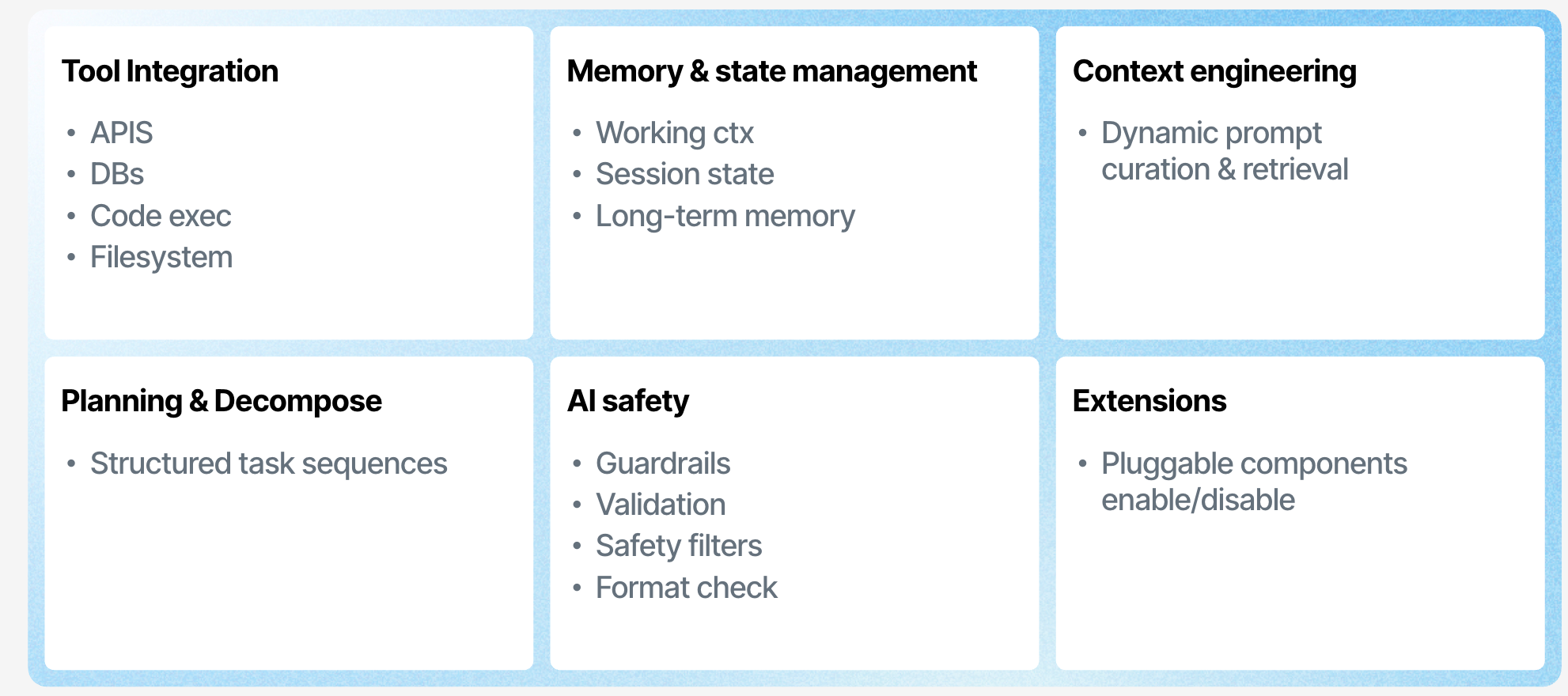

Think about what a production multi-agent system actually requires…

- A supervisor that triages and routes to specialists.

- Delegation chains where context flows through handoffs without dropping identity or permissions.

- Agent loops with hard limits on iterations and token spend.

- Cross-channel continuity, so a user who starts on web chat can resume on WhatsApp.

None of this is a model problem. It’s all runtime.
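Take the agent loop with hard limits as an example. A minimal sketch, assuming a hypothetical `step` callable that wraps one model call and reports how many tokens it used, could look like this. The names `StepResult`, `BudgetExceeded` and `run_agent_loop` are illustrative, not any particular platform's API.

```python
from dataclasses import dataclass
from typing import Callable


class BudgetExceeded(Exception):
    """Raised when the loop hits its iteration or token limit."""


@dataclass
class StepResult:
    output: str
    tokens_used: int
    done: bool


def run_agent_loop(
    step: Callable[[str], StepResult],  # hypothetical wrapper around one model call
    task: str,
    max_iterations: int = 5,
    max_tokens: int = 2000,
) -> str:
    tokens_spent = 0
    current = task
    for iteration in range(1, max_iterations + 1):
        result = step(current)
        tokens_spent += result.tokens_used
        # The runtime enforces the budget, regardless of what the model wants to do next.
        if tokens_spent > max_tokens:
            raise BudgetExceeded(f"token budget exceeded after {iteration} iterations")
        if result.done:
            return result.output
        current = result.output  # feed the intermediate output into the next step
    raise BudgetExceeded(f"no result after {max_iterations} iterations")
```

The model never sees these limits. They sit in the harness, which is the point: the stop condition is a property of the runtime, not of the reasoning.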

As I noted before, hybrids combining structured workflows and modular skills are emerging as the practical path forward.

But the question is whether each team should be building those hybrids independently, or whether the patterns are mature enough to be platform primitives.

When AI builds agents

Here’s where it gets interesting…

The same AI that powers agents is now capable of building them.

But the effectiveness of AI-generated agent code depends entirely on what it generates into.

When AI generates into an unbounded space, raw orchestration code with infinite implementation choices, the output is high-variance and requires deep review.

When it generates into a structured, constrained domain, a language with named constructs for the exact concepts agent developers work with, its reasoning concentrates on the right problem: what should the agent do?

I touched on this before: AI agents eliminate rigid dependencies by dynamically creating solutions.

But that dynamism needs a target … a domain-specific surface that validates at compile time and enforces at execution time.
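As a rough sketch of what such a surface might look like, consider a declarative agent spec that is checked before anything runs and enforced again on every tool call. Everything here is an assumption for illustration: `AgentSpec`, `validate`, `call_tool` and the `ALLOWED_TOOLS` registry are hypothetical, and Python stands in for a real domain-specific language.

```python
from dataclasses import dataclass

# Hypothetical registry of tools the platform exposes.
ALLOWED_TOOLS = {"search_orders", "issue_refund"}


@dataclass(frozen=True)
class AgentSpec:
    """The constrained surface an AI (or a person) generates into."""
    name: str
    tools: frozenset[str]
    max_iterations: int


def validate(spec: AgentSpec) -> list[str]:
    """'Compile-time' check: return a list of problems, empty if the spec is valid."""
    errors = []
    unknown = spec.tools - ALLOWED_TOOLS
    if unknown:
        errors.append(f"unknown tools: {sorted(unknown)}")
    if spec.max_iterations < 1:
        errors.append("max_iterations must be at least 1")
    return errors


def call_tool(spec: AgentSpec, tool: str) -> str:
    """Execution-time check: refuse any tool the spec did not declare."""
    if tool not in spec.tools:
        raise PermissionError(f"{spec.name} may not call {tool}")
    return f"{tool} called"  # placeholder for the real tool invocation
```

A generated spec that names a tool the platform does not offer fails in `validate` before it is deployed, and a running agent that reaches for an undeclared tool fails in `call_tool`. The review burden shifts from reading orchestration code to reading a short declaration.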
